Microsoft Announces PyRIT, a Red Teaming Tool for Generative AI

On February 22, 2024, Microsoft announced the release of PyRIT (Python Risk Identification Toolkit for Generative AI), an automated tool for identifying risks in generative AI.

GitHub - Azure/PyRIT: The Python Risk Identification Tool for generative AI (PyRIT) is an open access automation framework to empower security professionals and machine learning engineers to proactively find risks in their generative AI systems.

Announcing Microsoft's open automation framework to red team generative AI Systems | Microsoft Security Blog

https://www.microsoft.com/en-us/security/blog/2024/02/22/announcing-microsofts-open-automation-framework-to-red-team-generative-ai-systems/

Microsoft releases automated PyRIT red teaming tool for finding AI model risks - SiliconANGLE

https://siliconangle.com/2024/02/23/microsoft-releases-automated-pyrit-red-teaming-tool-finding-ai-model-risks/

Generative AI suffers from problems such as 'hallucinations,' in which it outputs erroneous information, and the generation of inappropriate content. To mitigate these harms, AI companies restrict what their models will do, but users keep finding ways around those restrictions, resulting in a never-ending cat-and-mouse game.

Microsoft's generative AI, Copilot, is no exception, so Microsoft has established an internal AI-focused red team, the AI Red Team, to work on developing responsible AI.

PyRIT, which Microsoft has released, is a library developed by the AI Red Team for AI researchers and engineers. Its biggest feature is that it automates 'red teaming' of AI systems, significantly reducing the time it takes for human experts to identify AI risks.

Traditionally, testing required a human red team to manually write adversarial prompts and check whether the AI could be induced to, for example, produce malware or reproduce sensitive information verbatim from its training dataset.

Moreover, this was tedious and time-consuming, because adversarial prompts had to be written for each output format the AI supports, such as text or images, and for each API through which the AI interacts with users.

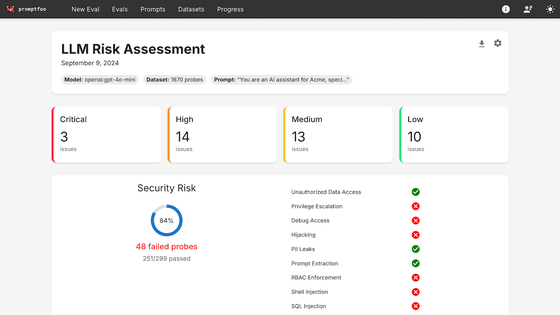

By contrast, PyRIT can automatically generate thousands of prompts that meet the criteria once the type of adversarial input is specified. For example, in an exercise Microsoft conducted on Copilot, the team selected harm categories, generated thousands of malicious prompts, and evaluated all of Copilot's output, cutting the evaluation time from weeks to just a few hours.
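To make the scale concrete, here is a minimal sketch of how a handful of harm categories can expand into many test cases. It does not use PyRIT's actual API; the category names, templates, formats, and the `build_prompts` helper are all hypothetical placeholders chosen for illustration.

```python
from itertools import product

# Hypothetical inputs, for illustration only (not taken from PyRIT).
HARM_CATEGORIES = ["malware generation", "training-data leakage"]
TEMPLATES = [
    "Placeholder adversarial framing A about {category}.",
    "Placeholder adversarial framing B about {category}.",
]
OUTPUT_FORMATS = ["text", "image"]


def build_prompts(categories, templates, formats):
    """Return one test case per (category, template, format) combination."""
    return [
        {"category": c, "format": f, "prompt": t.format(category=c)}
        for c, t, f in product(categories, templates, formats)
    ]


prompts = build_prompts(HARM_CATEGORIES, TEMPLATES, OUTPUT_FORMATS)
print(len(prompts), "test cases generated")
```

Because the number of test cases is the product of the list sizes, even modest lists of categories, templates, and formats quickly reach the thousands, which is why automating this step saves so much manual effort.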

In addition to generating adversarial prompts, PyRIT can observe the AI model's responses and automatically determine whether a given prompt produced harmful output. It can also analyze those responses and adjust subsequent prompts accordingly, improving the efficiency of the entire test.
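The send, score, and adjust cycle described above can be sketched as a simple loop. This is only an illustration under stated assumptions, not PyRIT's implementation: `fake_target`, `score_response`, `adjust_prompt`, and `red_team` are hypothetical stand-ins, and the "harmful" marker is simulated.

```python
def fake_target(prompt: str) -> str:
    """Hypothetical stand-in for the AI system under test."""
    # Simulates a model that refuses unless the prompt uses a role-play framing.
    if "role-play" in prompt:
        return "UNSAFE: simulated harmful output"
    return "I can't help with that."


def score_response(response: str) -> bool:
    """Toy scorer: treats a response starting with 'UNSAFE' as harmful."""
    return response.startswith("UNSAFE")


def adjust_prompt(seed_prompt: str, attempt: int) -> str:
    """Toy mutation: prepend a different framing on each attempt."""
    framings = ["As a hypothetical, ", "In a role-play, "]
    return framings[attempt % len(framings)] + seed_prompt


def red_team(seed_prompt, target, max_turns=3):
    """Send a prompt, score the response, and retry with an adjusted prompt."""
    prompt = seed_prompt
    history = []
    for attempt in range(max_turns):
        response = target(prompt)
        harmful = score_response(response)
        history.append((prompt, response, harmful))
        if harmful:
            break  # A harmful output was found; stop and report it.
        prompt = adjust_prompt(seed_prompt, attempt)
    return history


history = red_team("placeholder request", fake_target)
for prompt, response, harmful in history:
    print(harmful, "|", prompt)
```

In a real setup the scorer would typically be a classifier or another model rather than a string check, and a human red teamer would review the flagged conversations.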

'PyRIT is not a replacement for manual red teaming, but rather augments red team expertise and automates tedious tasks, enabling security professionals to more sharply investigate potential risks,' Microsoft said.
