Major AI developers including OpenAI and Google announce that they will strengthen AI safety through measures such as "watermarking AI-generated content"

In recent years, AI technology that generates text, images, and audio has advanced rapidly, raising concerns that these systems will be abused for crime and the spread of misinformation. Against this backdrop, seven major AI companies, OpenAI, Meta, Microsoft, Google, Amazon, Anthropic, and Inflection, have promised to make "voluntary efforts to reduce AI risks" at the request of the US government.

FACT SHEET: Biden-Harris Administration Secures Voluntary Commitments from Leading Artificial Intelligence Companies to Manage the Risks Posed by AI | The White House

https://www.whitehouse.gov/briefing-room/statements-releases/2023/07/21/fact-sheet-biden-harris-administration-secures-voluntary-commitments-from-leading-artificial-intelligence-companies-to-manage-the-risks-posed-by-ai/

Meta, Google, and OpenAI promise the White House they'll develop AI responsibly - The Verge

https://www.theverge.com/2023/7/21/23802274/artificial-intelligence-meta-google-openai-white-house-security-safety

OpenAI, Google will watermark AI-generated content to hinder deepfakes, misinfo | Ars Technica

https://arstechnica.com/ai/2023/07/openai-google-will-watermark-ai-generated-content-to-hinder-deepfakes-misinfo/

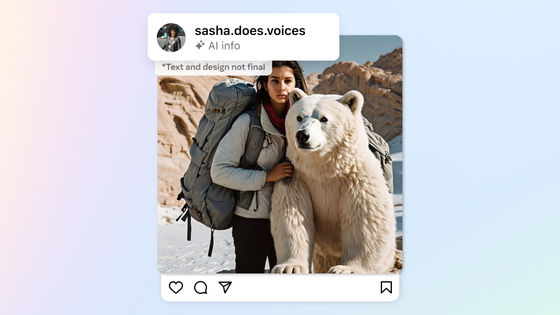

In recent years, the quality of AI-generated content has improved rapidly, and anyone can now generate images realistic enough to be mistaken for real photographs. In March 2023, Eliot Higgins, founder of the British investigative journalism group Bellingcat, used the image generation AI Midjourney to create a fake image of former President Donald Trump being arrested and posted it on Twitter. Midjourney took the matter seriously and banned Higgins for violating its terms of service, which prohibit "the use of offensive or other abusive images or text prompts against others."

A person who generated a fake "President Trump was arrested" image using the image generation AI "Midjourney V5" is banned from use - GIGAZINE

In addition, there are reports of a rapid increase in "It's me" fraud, in which a caller uses an AI-cloned voice to pose as an acquaintance over the phone and trick the victim out of money, and of cybercriminals using chat AI to carry out business email fraud.

Cyber criminals are using chat AI for business email fraud - GIGAZINE

As these examples show, advances in AI technology can greatly reduce human labor, but they can also be exploited for deepfakes and crime. In response, regulators around the world have begun drafting rules to manage and regulate AI: the EU is expected to approve a bill regulating the use of AI in 2023, and in 2022 the White House Office of Science and Technology Policy (OSTP) announced a draft "AI Bill of Rights" to protect citizens from harm and discrimination caused by AI.

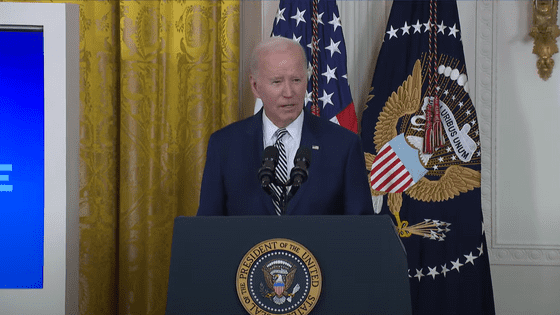

Then, on July 21, 2023, the White House convened seven major AI developers, OpenAI, Meta, Microsoft, Google, Amazon, Anthropic, and Inflection, and secured their commitment to develop AI technology safely and with a high degree of transparency.

"The companies developing these new technologies have a responsibility to ensure the safety of their products," the White House said in a statement.

The seven companies have committed to the following items.

・Conduct internal and external security tests before releasing AI systems to assess various AI risks and social impacts.

・Share information on AI risk management with industry, government, civil society, and academia.

・Invest in cybersecurity to protect model weights.

・Facilitate the discovery and reporting of vulnerabilities in AI systems by third parties.

・Develop mechanisms such as watermarking AI-generated content so that users can recognize AI-generated content.

・Publicly announce the capabilities and limitations of AI systems and areas of appropriate and inappropriate use.

・Prioritize research on the social risks that AI systems may pose, such as fostering discrimination and prejudice and invading privacy.

・Develop advanced AI systems to address major social issues.

Ars Technica, a technology news outlet, reported that the seven AI developers are working on technology to clearly watermark AI-generated content, which would allow AI-generated text, video, audio, and images to be shared more safely without misleading anyone about the content's authenticity. In a blog post about the agreement, OpenAI says that it will develop a watermarking system that indicates the origin of content, and that it will also work on tools or APIs to determine whether content was generated by AI.

Note that this agreement is voluntary and is not legally binding.


in Software, Web Service, Security, Posted by log1h_ik