New York City to enforce AI regulation law requiring companies that use AI in recruitment to "notify and explain the use of AI"

As the world moves to formulate regulations on artificial intelligence (AI), New York City is ahead of other regions in preparing legislation to regulate the use of AI in hiring and promotion decisions, The New York Times reports.

New York City Moves to Regulate How AI Is Used in Hiring - The New York Times

Roy Kaufman on LinkedIn: A Hiring Law Blazes a Path for AI Regulation

https://www.linkedin.com/feed/update/urn:li:share:7067470918482030592

New York City wants to regulate the use of AI in hiring - US Today News

https://ustoday.news/new-york-city-wants-to-regulate-the-use-of-ai-in-hiring/

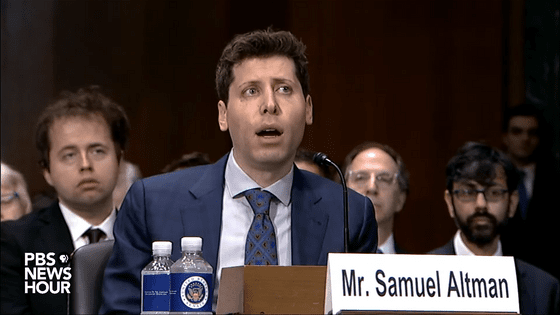

It has emerged that the New York City Department of Consumer and Worker Protection plans to begin enforcing, in July 2023, a law passed in 2021 that "regulates the use of high-risk technology in decisions such as hiring and promotions."

The law requires companies that use AI software in hiring to notify candidates that automated systems are being used, and requires those companies to undergo an independent audit every year to check the technology for bias. In addition, the general public can "inquire and find out what data companies collect and analyze." Companies that violate the law will be fined.

New York City's AI regulation law was criticized from opposite directions even before it came into force: public interest activists say the regulation does not go far enough, while business groups say it is not realistic.

Julia Stojanovic, director of

In addition, while expressing concern that there are loopholes in New York City's AI regulation law, Stojanovic said that "it's much better than no law" and that "unless you try to regulate it, you can't learn how to do it," noting that nobody will know what kinds of problems arise until regulation actually begins.

The AI regulation law applies to companies with employees in New York City, and labor experts are concerned that it 'could impact national practices.'

At least four states—California, New Jersey, New York, Vermont—and the District of Columbia have also revealed they are working on legislation to regulate the use of AI in employment. Illinois and Maryland have also enacted legislation restricting the use of certain AI technologies for workplace surveillance and occupational screening.

Alexandra Givens, director of the Center for Democracy & Technology, a political and civil rights organization, said of New York City's AI regulation law, "It was supposed to be a groundbreaking bill, but it became a watered-down law and has no effect." Givens explains that the law was watered down because it defines "automated hiring decision tools" as "technology that is introduced to substantially support or replace discretionary decision-making." Givens pointed out that this wording leaves the law open to a very narrow interpretation.

Givens also criticized the AI regulation law for limiting the types of groups that are assessed for unfair treatment. Specifically, although the law covers bias based on gender, race, and ethnicity, it does not cover discrimination against older workers and people with disabilities, which Givens sees as a problem.

Givens said, "My biggest concern is that this [New York City's AI regulation law] will become a template for AI regulation legislation that prevails at the national level, even though we should be asking policy makers to do more."

According to New York City officials, the city's AI regulation law has been "narrowed in scope to make it enforceable." The New York City Council and the New York City Department of Consumer and Worker Protection, which are instrumental in enforcing New York City's laws, consulted with many stakeholders, including public interest activists and software developers, in developing the law. "New York City's aim is to weigh the trade-offs between innovation and potential harm," said an official.

However, the Business Software Alliance, an industry group whose members include Microsoft, SAP and Workday, said that the independent AI testing requirements imposed by the AI regulation law are "impossible" to meet. The Business Software Alliance explains that this is because "the test environment is still evolving and lacks connections with planning and specialized regulatory authorities."

In applications that can affect your life, such as hiring or promotion, you have the right to be told how the decision was made. However, AI tools such as ChatGPT are becoming increasingly complex, and some experts say they may fall short of the goal of "explainable AI."

“The focus is on the results of algorithms, not how they work,” said Ashley Kasoban, executive director of the Responsible AI Institute, which develops certifications for the safe use of AI applications in the workplace, health care and finance.


in Note, Posted by logu_ii