Eight years after Google's AI labeled Black people as 'gorillas', image recognition AI from Google, Apple, Amazon, and Microsoft still avoids the 'gorilla' label

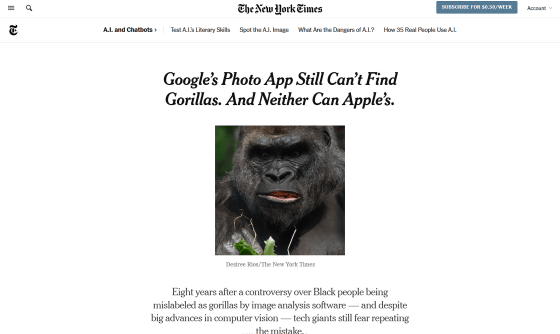

In 2015, Google's photo app, Google Photos, labeled photos of Black people as 'gorillas', sparking a controversy over 'AI bias'. Eight years later, AI technology has made great progress, yet the New York Times reports that image recognition AI from Google, Apple, Amazon, and Microsoft still avoids applying the 'gorilla' label.

Google's Photo App Still Can't Find Gorillas. And Neither Can Apple's. - The New York Times

Google's Photos App is Still Unable to Find Gorillas | PetaPixel

https://petapixel.com/2023/05/22/googles-photos-app-is-still-unable-to-find-gorillas/

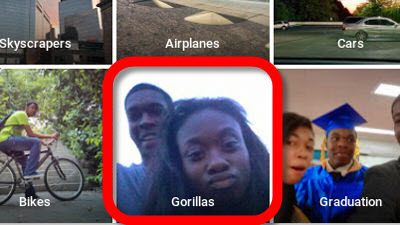

Google Photos has a feature in which AI automatically labels uploaded images. In May 2015, Jacky Alciné, a Black software developer, reported that a photo of himself and his friends had been labeled 'gorillas'. The incident is said to have occurred because the dataset used to train the AI system did not contain enough images of Black people, leaving the AI unable to reliably distinguish Black people from gorillas.

Developer apologizes for incident in which Google Photos recognized black people as gorillas - GIGAZINE

Eight years after the incident, the New York Times tested whether the image recognition AI of major technology companies had fixed the problem. It first assembled 44 images of people, animals, and everyday objects, then used Google Photos to search for terms such as 'cat' and 'kangaroo'. Google Photos handled other animals well, but a search for 'gorilla' returned no matching images, and searches for other primates such as baboons, chimpanzees, orangutans, and monkeys also failed.

Next, the same test was run on Apple's photo app. It identified most animal photos accurately but failed on primates, including gorillas. Photo search in Microsoft's OneDrive returned no results for any animal, and Amazon Photos returned unhelpfully broad results, for example showing pictures of all kinds of primates for a 'gorilla' search. The only primate that Google's and Apple's AI could identify was the 'lemur'.

According to the New York Times, Alciné, on learning that Google has still not fully resolved the problem, said that society puts too much trust in technology.

The test showed that Google Photos does not apply the 'gorilla' label, but this does not mean that even state-of-the-art AI cannot tell Black people and gorillas apart. Fearing public criticism over AI bias and malfunctions, technology companies sometimes deliberately disable features in their products and services for safety reasons, even when those features are technically feasible.

The New York Times wrote that Google, whose Android software powers most of the world's smartphones, has decided to turn off the ability to visually search for primates for fear that the system will make an offensive mistake and label a person as an animal. Apple, whose technology performed similarly to Google's in this test, appears to have disabled the ability to find monkeys and apes as well.

In fact, when the New York Times experimented with Google Lens, which runs searches based on a captured image, the AI avoided labeling images of gorillas and chimpanzees, yet correctly displayed other gorilla images as 'similar images'. This suggests that Google's AI can identify gorillas properly but that the image-labeling function has been deliberately turned off.

Margaret Mitchell, a former co-lead of the Ethical AI team at Google Research, Google's AI research division, joined the company after the 2015 incident but worked with the Google Photos team. She said she agreed with the decision to remove the 'gorilla' label from Google Photos, at least for a while.

'We have to think about how often someone needs to label a gorilla, versus the problem of perpetuating harmful stereotypes,' Mitchell said. 'The benefits do not outweigh the potential harm from getting it wrong.'

Big tech companies like Google are also sensitive to AI bias because billions of people around the world use their services. Even a rare glitch that affects only one in a billion users can go viral on the Internet and cause an uproar once it occurs.

But Vicente Ordóñez, an associate professor at Rice University who researches image recognition, argued that the problem should be solved rather than papered over: 'It's important to solve these problems. If they aren't solved, how can we trust this software in other scenarios?'


in Software, Web Service, Posted by log1h_ik