Google announces that it will update image search, Google Maps, and Google Translate with AI

Google AI makes Search more visual through Lens, multisearch

https://blog.google/products/search/visual-search-ai/

New Google Maps features including immersive view, Live view updates, electric vehicle charging tools and glanceable directions.

https://blog.google/products/maps/sustainable-immersive-maps-announcements/

New features make Translate more accessible for its 1 billion users

https://blog.google/products/translate/new-features-make-translate-more-accessible-for-its-1-billion-users/

Google is still drip-feeding AI into search, Maps, and Translate - The Verge

https://www.theverge.com/2023/2/8/23589886/google-search-maps-translate-features-updates-live-from-paris-event

Google, which has announced 'Bard', a conversational AI service built on LaMDA technology and positioned as a rival to ChatGPT, held a presentation in Paris on February 8, 2023, and announced AI-powered updates to a range of Google services.

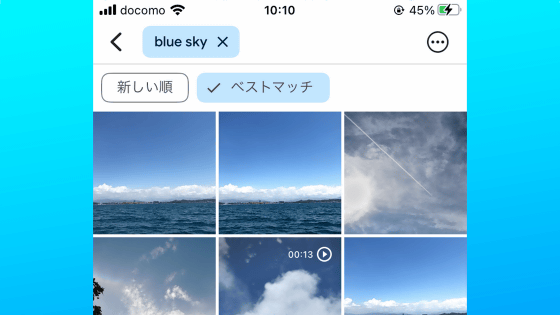

◆ Google Lens update

At the time of writing, you can search by entering a photo or image into the search bar. With this announcement, Google said that Google Lens will let you search the images and videos shown on your screen without leaving the site or app you are viewing. The feature will come to Android in the coming months.

In the coming months, we're introducing a ✨major update ✨ to help you search what's on your mobile screen.

—Google Europe (@googleeurope) February 8, 2023

You'll soon be able to use Lens through Assistant to search what you see in photos or videos across websites and apps on Android. #googlelivefromparis pic.twitter.com/UePB421wRY

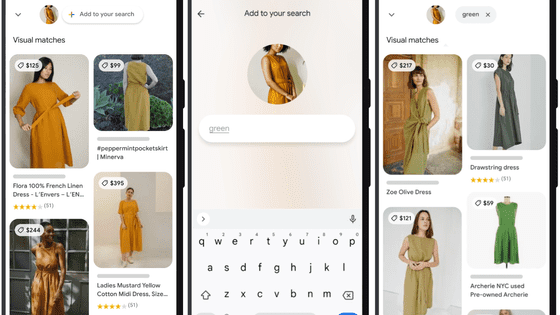

In addition, multisearch, which lets you search with an image and text at the same time, is available in the United States and the United Kingdom at the time of writing. The feature is being expanded to take location into account, so that AI can, for example, narrow the results down to nearby Chinese restaurants that offer vegetarian food. This expanded feature will roll out globally in the coming months.

Have you already tried multisearch in the Google app? It allows you to take a pic and ask a question to find what you want.

—Google Europe (@googleeurope) February 8, 2023

And soon, you'll be able to add 'near me' to your image to find what you're looking for nearby, like a local snack. #googlelivefromparis pic.twitter.com/SPLFICUoFd

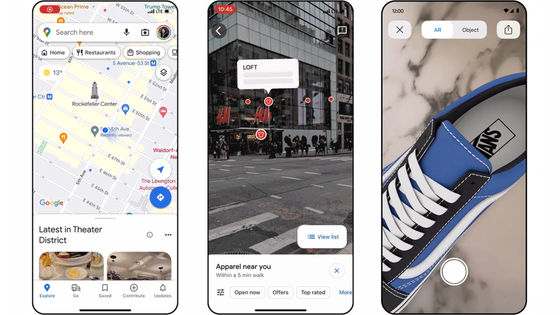

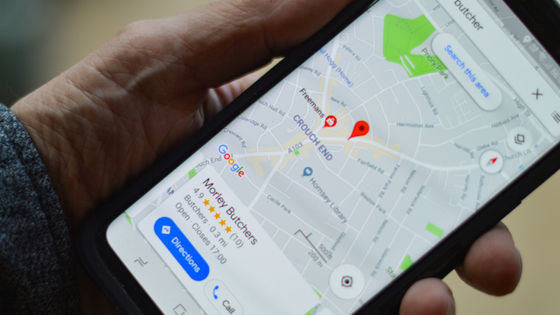

◆ Google Maps update

A feature called 'Immersive View' begins rolling out in London, Los Angeles, New York, San Francisco, and Tokyo on February 8. Immersive View fuses billions of Street View and aerial images to create a digital model of the city. Information such as weather, traffic, and crowd levels can also be overlaid on the model.

Are you the sort of person who needs to get the feel of somewhere before you commit?

—Google Europe (@googleeurope) February 8, 2023

With immersive view on Google Maps, you can see what a neighborhood is like before you even set foot there

✨ Coming to more cities in the next few months ✨ #googlelivefromparis pic.twitter.com/VPvqHP25ai

To create these digital models, Google uses an advanced AI technique called neural radiance fields (NeRF), which converts ordinary images into 3D representations. NeRF makes it possible to reproduce realistic detail such as lighting, textures, and backgrounds.

In addition, Indoor Live View, which combines AI and augmented reality (AR) to overlay directions on the camera view and guide you to buildings, restaurants, and other destinations, is being expanded to more than 1,000 airports and stations, including in Tokyo.

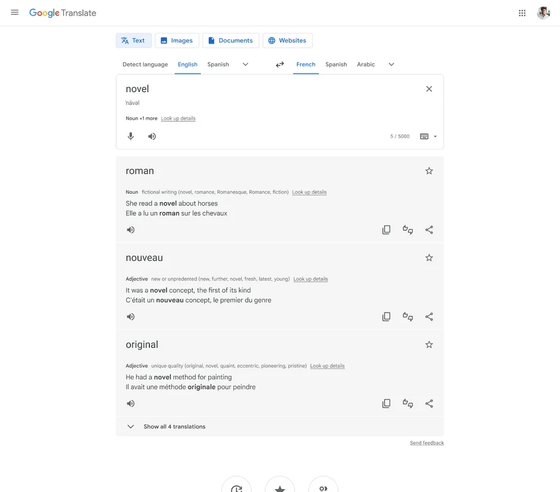

◆ Google Translate update

Google Translate has been improved so that, when translating languages such as English, French, and Japanese, it renders phrases and idioms more accurately and chooses words that fit your intended meaning.

In addition, the input area of the Google Translate app has been enlarged, and voice input and image translation are now easier to reach. This redesign is available only on Android at the time of writing, but will come to iOS within a few weeks.

AI-based image translation is also being enhanced as an Android-only feature. Text captured with the device's camera will be translated and blended naturally into the original image using AR.

in Web Service, Posted by log1r_ut