Google releases a feature that uses machine learning to read out food information just by pointing a smartphone at the product

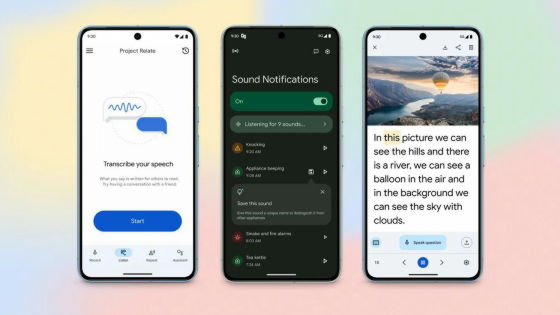

Google has added a 'food label mode' to 'Lookout', its app for the visually impaired. The mode reads out a product's label name when the user simply points the smartphone's camera at the food. Label identification uses on-device machine learning, so the design is low-latency and does not depend on a network connection.

Google AI Blog: On-device Supermarket Product Recognition

'Lookout', released by Google in 2019, is an Android application that supports visually impaired users by detecting the surrounding environment, announcing the presence of objects, and reading text aloud. At launch it was distributed only in North America, but at the time of writing, users in Japan can also install Lookout from Google Play.

Google announces 'Lookout', an application that accurately conveys surrounding information as 'eyes' for visually impaired people - GIGAZINE

For visually impaired people, it is difficult to tell apart food items packaged in boxes, cans, bottles, and other containers of the same shape when the only difference is the printed label. Lookout's new 'food label' mode is meant to address this difficulty. In food label mode, users simply point their smartphone's camera at the food, and Lookout identifies it and reads out the brand name and size.

Food label mode is implemented with 'on-device' machine learning, in which the machine learning runs on the smartphone or IoT device itself rather than in the cloud. Compared with cloud-based machine learning, the on-device approach has the advantages of low latency and no dependence on the network. Google has installed the on-device machine learning chip '

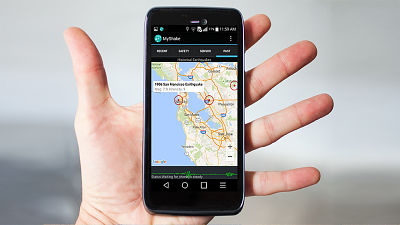

Since Lookout can be installed in Japan at the time of writing, I tried out its food label mode. First, access the following URL from your smartphone. Lookout is available for smartphones with 2GB or more of RAM running Android 6.0 or later.
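The requirements above can be written as a simple eligibility check. The following is only a minimal sketch: the `Device` class and the `meets_lookout_requirements` function are hypothetical names made up for illustration, not part of Lookout or Android. It assumes that the RAM figure is a 2GB minimum and relies on the fact that Android 6.0 corresponds to API level 23.

```python
from dataclasses import dataclass

# Assumption: the RAM requirement is treated as "2GB or more".
MIN_RAM_BYTES = 2 * 1024**3
# Android 6.0 (Marshmallow) corresponds to API level 23.
MIN_API_LEVEL = 23


@dataclass(frozen=True)
class Device:
    """Hypothetical description of a smartphone, for illustration only."""
    ram_bytes: int
    api_level: int


def meets_lookout_requirements(device: Device) -> bool:
    """Return True if the device satisfies both stated requirements."""
    return (
        device.ram_bytes >= MIN_RAM_BYTES
        and device.api_level >= MIN_API_LEVEL
    )


if __name__ == "__main__":
    phone = Device(ram_bytes=4 * 1024**3, api_level=29)
    print(meets_lookout_requirements(phone))
```

In practice Google Play performs this kind of compatibility check itself, so the sketch is only meant to make the two conditions explicit.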

Lookout by Google - Apps on Google Play

https://play.google.com/store/apps/details?id=com.google.android.apps.accessibility.reveal&hl=en

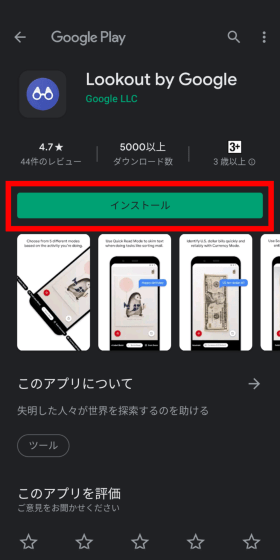

When Google Play starts, tap 'Install'.

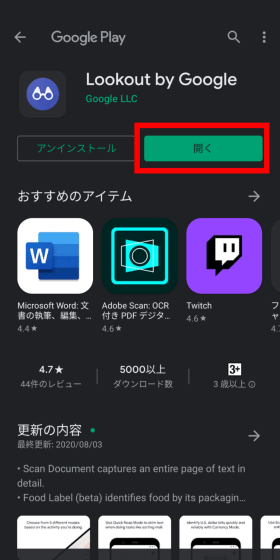

When installation is complete, tap 'Open'.

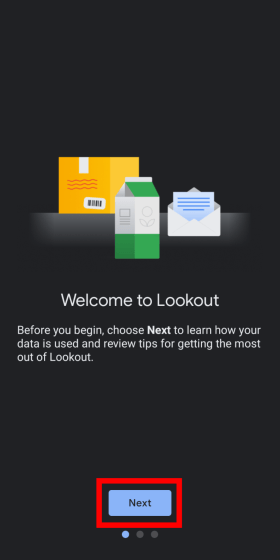

When Lookout starts, tap 'Next'. Japanese is not supported at the time of writing.

Tap 'I agree' to agree to the data collection.

Tap 'Get started'.

Lookout itself can be installed in Japan, but it does not appear to be optimized for Japan yet. Tap 'OK' to proceed.

In food label mode, a product is recognized by pointing the camera at its barcode or at the front of its package. Tap 'OK'.

Because Lookout performs machine learning on the device, you need to download the data in advance. The download is 250MB. Tap 'Download' to start it.

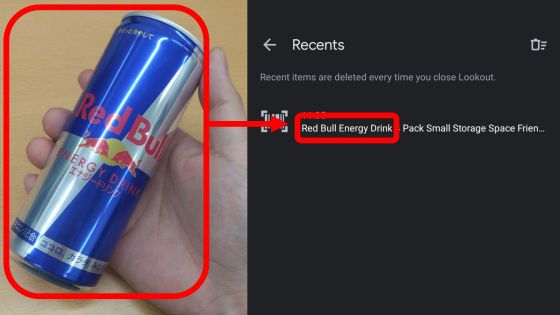

Once the data was downloaded, the camera was ready to read food labels. When I pointed the camera at a can of Red Bull, which has the same package design in Japan and overseas, Lookout read out the product name as 'Red Bull Energy Drink'.

You can pause and resume scanning with the button on the left, and check the history of the scanned food with the button on the right.

When I checked the history, the Red Bull product name had been recorded correctly.

I also tried pointing the camera at food products sold for the Japanese domestic market, but there was no response.

According to Google, the technology behind Lookout's food label mode could enable a variety of new shopping experiences, such as presenting allergen and nutrition information, customer ratings, product comparisons, and price tracking. Google says it will keep exploring such applications while continuing its research to improve the quality and robustness of the underlying on-device model.
