An American company has released 'Laguna XS.2,' a high-performance open-source model that runs locally. Can it compete with the Chinese companies that are making great strides in the open-source market?

Poolside, an American AI development company, released its AI models 'Laguna M.1' and 'Laguna XS.2' on April 29, 2026. Of these, Laguna XS.2 is publicly available as an open model and boasts performance exceeding Google's Gemma 4.

Introducing Laguna XS.2 and Laguna M.1 — Poolside

Laguna XS.2 and M.1: A Deeper Dive — Poolside

https://poolside.ai/blog/laguna-a-deeper-dive

Laguna M.1 is a MoE (Mixture of Experts) model with a total of 225 billion parameters, of which 23 billion are active. It was trained on a dataset of 30 trillion tokens, and training was completed at the end of 2025. It is not a fine-tune of an existing base model; Poolside developed it independently, including pre-training.

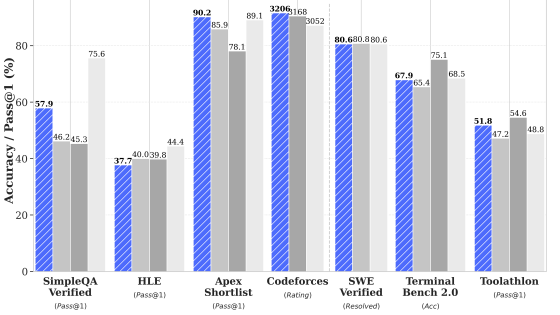

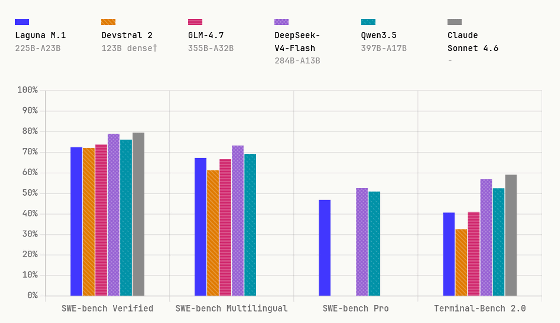

The graph below compares the performance of 'Laguna M.1 (225B-A23B)', 'Devstral 2 (123B)', 'GLM-4.7 (355B-A32B)', 'DeepSeek-V4-Flash (284B-A13B)', 'Qwen3.5 (397B-A17B)', and 'Claude Sonnet 4.6'. While Laguna M.1 surpasses Devstral 2's score, it falls short of the Chinese-made models 'GLM-4.7', 'DeepSeek-V4-Flash', and 'Qwen3.5'.

Laguna XS.2 is a MoE model with a total of 33 billion parameters, of which 3 billion are active. It was trained on 30 trillion tokens, and training took 5 weeks.
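To make the total versus active parameter figures concrete, the following minimal sketch computes what share of each model's parameters is used per token. The parameter counts are the ones quoted in this article; the dictionary layout and the function name `active_fraction` are illustrative choices, not anything published by Poolside.

```python
# Parameter counts in billions, as quoted in this article.
MODELS = {
    "Laguna M.1": {"total_b": 225, "active_b": 23},
    "Laguna XS.2": {"total_b": 33, "active_b": 3},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Return the share of a MoE model's parameters that is active per token."""
    return active_b / total_b


for name, params in MODELS.items():
    frac = active_fraction(params["total_b"], params["active_b"])
    print(f"{name}: {frac:.1%} of parameters active per token")
```

In both models roughly one tenth of the parameters take part in processing any single token, which is why a MoE model can be much cheaper to run than a dense model of the same total size.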

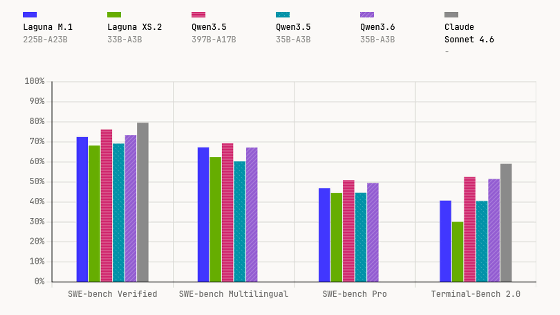

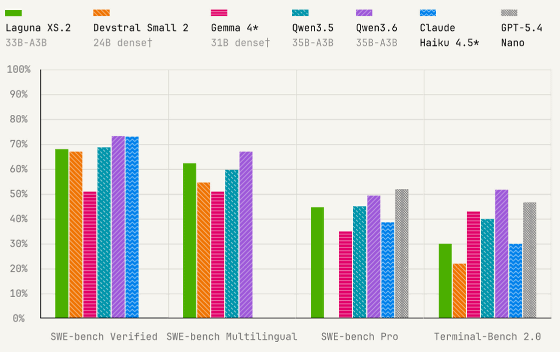

The benchmark results for 'Laguna XS.2 (33B-A3B)', 'Devstral Small 2 (24B)', 'Gemma 4 (31B)', 'Qwen3.5 (35B-A3B)', 'Qwen3.6 (35B-A3B)', 'Claude Haiku 4.5', and 'GPT-5.4 Nano' are as follows. Laguna XS.2 outperforms the similarly sized Qwen3.5 in some tests, but falls short of Qwen3.6.

Laguna M.1 and Laguna XS.2 are available via API, and API usage is free for a limited time. Laguna XS.2 is also released as an open model. Poolside stated, 'We believe that Western countries need strong open models, and we want to contribute to the ecosystem,' emphasizing the need for open models to compete with Chinese AI companies.

Laguna XS.2 can be downloaded from the following links. Poolside developed Laguna XS.2 in collaboration with NVIDIA and has also released a quantized version using NVFP4. Quantized versions using FP8 and INT4 are available as well. All versions are licensed under the Apache License 2.0.

poolside/Laguna-XS.2 · Hugging Face

https://huggingface.co/poolside/Laguna-XS.2

poolside/Laguna-XS.2-FP8 · Hugging Face

https://huggingface.co/poolside/Laguna-XS.2-FP8

poolside/Laguna-XS.2-INT4 · Hugging Face

https://huggingface.co/poolside/Laguna-XS.2-INT4

poolside/Laguna-XS.2-NVFP4 · Hugging Face

https://huggingface.co/poolside/Laguna-XS.2-NVFP4
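As a rough guide to which of the variants listed above might fit on a given machine, the sketch below estimates the size of the weights alone from the 33 billion parameter count. The bits-per-weight values are assumptions: the base repository is assumed to hold 16-bit weights, and the quantized variants are treated as exactly 8 or 4 bits per weight. Real files will differ somewhat because of quantization scale factors and layers kept at higher precision, and the estimate ignores the KV cache and activations. The helper names `weight_gb` and `variants_that_fit` are hypothetical.

```python
TOTAL_PARAMS = 33e9  # total parameter count of Laguna XS.2, from the article

# Assumed bits per weight for each repository (an assumption, not from Poolside).
VARIANTS = {
    "poolside/Laguna-XS.2": 16,       # assumed 16-bit base weights
    "poolside/Laguna-XS.2-FP8": 8,
    "poolside/Laguna-XS.2-INT4": 4,
    "poolside/Laguna-XS.2-NVFP4": 4,  # ignores the per-block scale overhead
}


def weight_gb(total_params: float, bits_per_weight: int) -> float:
    """Estimate weight storage in gigabytes (10^9 bytes)."""
    return total_params * bits_per_weight / 8 / 1e9


def variants_that_fit(memory_gb: float) -> list[str]:
    """List the repositories whose estimated weights fit in memory_gb."""
    return [
        repo
        for repo, bits in VARIANTS.items()
        if weight_gb(TOTAL_PARAMS, bits) <= memory_gb
    ]


for repo, bits in VARIANTS.items():
    print(f"{repo}: about {weight_gb(TOTAL_PARAMS, bits):.1f} GB of weights")
```

Under these assumptions, `variants_that_fit(24)` returns only the INT4 and NVFP4 repositories, since their weights come to about 16.5 GB. Treat this as a lower bound on the memory needed.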

Additionally, MLX, an AI framework for Apple Silicon, also supports the execution of Laguna-XS.2.

Day-zero support for Laguna XS.2 in MLX🔥🚀 @poolsideai's first open-weight model is now supported in MLX.

33B total params, 3B activated, built for agentic coding, and running natively on Apple Silicon.

Huge thanks to team at Poolside for the early collaboration 🙌🏽

Heads up:… https://t.co/I7VdkGHy19

— Prince Canuma (@Prince_Canuma) April 28, 2026

in AI, Posted by log1o_hf