'Claude Mythos Preview' and 'GPT-5.4-Cyber' may discover vulnerabilities faster than OSS maintainers can fix them, potentially increasing risk

On April 7, 2026, Anthropic announced 'Claude Mythos Preview,' an AI model with high cyberattack capabilities, and on April 14, 2026, OpenAI announced 'GPT-5.4-Cyber,' an AI model with relaxed security restrictions. Observers have warned that the emergence of these high-performance AI models could have a significant impact on the security of open-source software (OSS).

The “AI Vulnerability Storm”: Building a “Mythos-ready” Security Program | Lab Space

https://labs.cloudsecurityalliance.org/mythos-ciso/

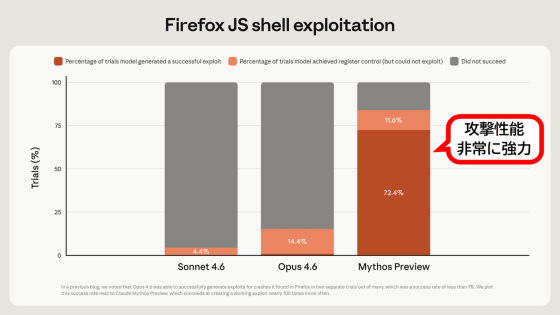

Claude Mythos Preview is an AI model that offers significantly improved inference capabilities compared to Claude Opus 4.6, and it has been shown to be particularly capable at 'exploiting vulnerabilities to launch cyberattacks.' Claude Mythos Preview is not available to the general public; it is being provided to select organizations to help them improve their cybersecurity capabilities.

Anthropic develops 'Claude Mythos Preview,' an AI with extremely high cyberattack capabilities, and has also launched 'Project Glasswing,' which will provide a preview version to companies such as Microsoft and Apple - GIGAZINE

GPT-5.4-Cyber is a model that enhances cyberattack capabilities by relaxing the functional limitations imposed on GPT-5.4, and like Claude Mythos Preview, it is available to security researchers only.

OpenAI begins offering its cyberattack-focused AI 'GPT-5.4-Cyber' to security researchers, possibly as a countermeasure against Anthropic, which provides Claude Mythos - GIGAZINE

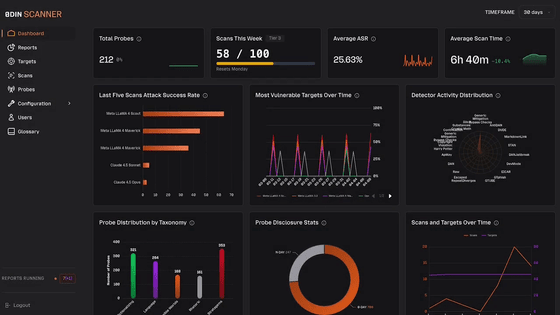

At the time of writing, Claude Mythos Preview and GPT-5.4-Cyber are available only to select organizations. However, Aaron El Haddad, CEO of security firm Echo, warns that open-source software faces a crisis: if such high-performance AI models become widely used, security measures may not be able to keep up.

Many open-source software (OSS) projects are maintained by people who have other full-time jobs, and it takes an average of 80 days from the time a vulnerability is discovered until a patch reaches general users. High-performance AI models, by contrast, can analyze entire OSS projects and detect vulnerabilities one after another at a speed that conventional security scanning systems cannot match. Because maintainers' time is limited, the vulnerabilities being discovered can pile up faster than they can be fixed.

Along with the announcement of Claude Mythos Preview, Anthropic also launched a security project called 'Project Glasswing.' Project Glasswing aims to strengthen defenses by providing OSS maintainers with access to Claude Mythos Preview, but El Haddad points out that 'Project Glasswing cannot overcome the fundamental limitations of OSS projects. Fixing vulnerabilities still requires re-verification and production deployment,' and warns that the emergence of high-performance AI will lengthen the period during which users are exposed to vulnerabilities.

Claude Mythos Preview has already had a significant impact on open-source projects: the open-source scheduling tool 'Cal.com' has announced that it will make its security-related components closed source.

Open-source software developers decide to move to closed-source software due to security risks associated with AI - GIGAZINE

Even before the release of Claude Mythos Preview, maintainers were being worn down by large numbers of pull requests containing low-quality AI-generated code, which led to proposals for systems that manage the trustworthiness of project contributors.

The proliferation of AI is leading to an increase in low-quality code submissions to open-source projects, and a 'system for managing contributor trustworthiness' by the developer of Ghostty has also emerged - GIGAZINE

On the subject of low-quality AI-generated code, the following article by Yusukebe, the developer of the web framework 'Hono,' explains in detail problems such as 'having to manually review large amounts of code' and 'receiving pull requests that can only be described as spam.'

What is the problem with the AI Slop issue in open source software?

https://zenn.dev/yusukebe/articles/3fd5bc6ea341c9
