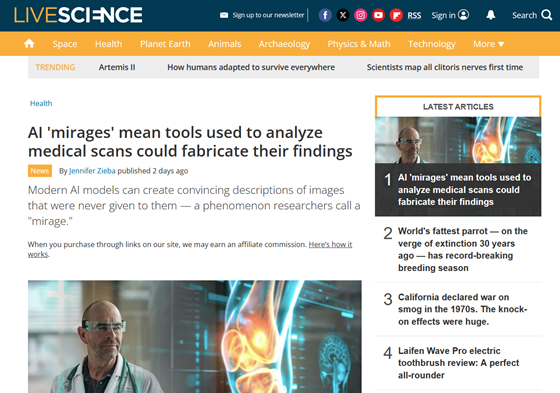

Medical AI models may produce plausible-sounding diagnoses even when the images those diagnoses depend on are never provided.

AI tools that help interpret mammograms, MRI scans, and pathology slides are spreading through medicine. However, a new preprint study covered by the science outlet Live Science suggests that these models may not actually be looking at the images when they answer.

MIRAGE: The Illusion of Visual Understanding | arXiv

https://arxiv.org/abs/2603.21687

AI 'mirages' mean tools used to analyze medical scans could fabricate their findings | Live Science

https://www.livescience.com/health/ai-mirages-mean-tools-used-to-analyze-medical-scans-could-fabricate-their-findings

A research team led by Mohammad Asadi of Stanford University coined the term 'mirage reasoning' for a process in which an AI model fabricates the contents of an image it was never given, then goes on to explain and answer on the basis of that fictitious image.

To investigate mirage reasoning, the team gave AI models only the text of questions about tissue samples, chest X-rays, and brain MRIs, and compared the results with those obtained when the images were included. According to Live Science, the team examined 12 AI models and found that, even without images, many of them did not simply reply that no image was available. Instead, they described the non-existent image and then went on to give a diagnosis or answer.
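The with-and-without-image comparison can be illustrated with a small probe. This is a hypothetical sketch: the article does not say how the researchers graded text-only answers, so the function names (`classify_text_only_response`, `mirage_rate`) and the cue lists are illustrative assumptions, and keyword matching is only a crude stand-in for a proper grading rubric. It labels an answer produced with no image attached as acknowledging the missing image, as a possible mirage (describing visual findings anyway), or as unclear.

```python
import re
from typing import List

# Hypothetical cue phrases (assumption, not from the study). A real evaluation
# would need a careful rubric or human grading rather than keyword matching.
MISSING_IMAGE_CUES = [
    r"\bno image\b",
    r"\bimage (?:was|is) not (?:provided|attached|included)\b",
    r"\b(?:cannot|can't|unable to) (?:see|view|access) (?:the|an|any) image\b",
]
VISUAL_CLAIM_CUES = [
    r"\bthe (?:image|scan|x-ray|mri|slide) (?:shows|demonstrates|reveals)\b",
    r"\b(?:opacity|lesion|mass|effusion)\b",
]


def classify_text_only_response(response: str) -> str:
    """Label an answer that was produced WITHOUT any image attached."""
    text = response.lower()
    # Checked first: the model noticed that the image is missing.
    if any(re.search(p, text) for p in MISSING_IMAGE_CUES):
        return "acknowledged_missing_image"
    # Otherwise, describing visual findings suggests a possible mirage.
    if any(re.search(p, text) for p in VISUAL_CLAIM_CUES):
        return "possible_mirage"
    return "unclear"


def mirage_rate(responses: List[str]) -> float:
    """Fraction of text-only responses labelled as possible mirages."""
    if not responses:
        return 0.0
    labels = [classify_text_only_response(r) for r in responses]
    return labels.count("possible_mirage") / len(labels)


samples = [
    "No image was provided, so I cannot assess the chest X-ray.",
    "The X-ray shows a right lower lobe opacity consistent with pneumonia.",
    "Pneumonia.",
]
for s in samples:
    print(classify_text_only_response(s))
print(round(mirage_rate(samples), 3))  # 0.333
```

Running the same questions with and without images and comparing both the labels and the accuracy is what exposes a model that answers confidently about pictures it never received.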

The phenomenon was observed not only in medicine but also in 20 other domains, including satellite, crowd, and bird imagery, and it was especially pronounced in medical diagnosis. When asked about brain MRIs, chest X-rays, electrocardiograms, and pathology images without being given the images, the models tended to favor more serious diagnoses that would call for additional clinical intervention.

The team also reported that, in one case, a model took first place on a chest X-ray question-answering benchmark without being given any images, showing that high scores on existing benchmarks do not necessarily mean a model truly understands images.

The study also found that models scored highly when asked to answer 'assuming there is an image,' but their performance dropped significantly when they were explicitly told 'there is no image, so please answer based on your inference.' From this gap, the team suggests that models may answer conservatively when they know no image is present, yet slip into a 'mirage mode,' behaving as if they had seen an image, when that fact is left unstated.

To address these problems, the team proposed an evaluation method called 'B-Clean' so that multimodal AI, which handles images and text together, is actually evaluated on its use of the images. B-Clean removes questions that models can answer well without the images, as well as questions whose answers can easily be inferred from the question text alone, leaving only questions that cannot be solved without looking at the images.
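The filtering idea can be sketched as follows, assuming you already have, for each benchmark question, a record of whether several text-only runs (models answering without the image) got it right. The function name `filter_vision_dependent`, the input format, and the threshold are hypothetical. The article does not describe B-Clean's exact criteria, so this illustrates the principle rather than the team's implementation.

```python
from typing import Dict, List, Set


def filter_vision_dependent(
    text_only_correct: Dict[str, List[bool]],
    max_text_only_accuracy: float = 0.0,
) -> Set[str]:
    """Keep questions that text-only runs could not reliably solve.

    text_only_correct maps a question ID to one bool per text-only run
    (e.g. different models answering without the image), True if correct.
    Hypothetical helper, not the published B-Clean procedure.
    """
    kept = set()
    for qid, outcomes in text_only_correct.items():
        # With no text-only evidence, treat the question as unsolved (kept).
        accuracy = sum(outcomes) / len(outcomes) if outcomes else 0.0
        if accuracy <= max_text_only_accuracy:
            kept.add(qid)
    return kept


probes = {
    "q1": [True, True, False],    # often solvable from text alone
    "q2": [False, False, False],  # no text-only run solved it
    "q3": [False, True, False],   # solved once, possibly by guessing
}
print(sorted(filter_vision_dependent(probes)))        # ['q2']
print(sorted(filter_vision_dependent(probes, 0.34)))  # ['q2', 'q3']
```

With the default threshold of 0.0, a single lucky guess by any text-only run is enough to drop a question, so this version errs toward removing too many questions rather than too few.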

The team applied B-Clean to three benchmarks (MMMU-Pro, MedXpertQA-MM, and MicroVQA) and found that it cut the number of questions to about a quarter of the original. On the filtered benchmarks, not only did accuracy change but the ranking of the AI models changed as well, suggesting that earlier rankings may have been inflated by cues other than the images.
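To see how filtering can reorder a leaderboard, the sketch below recomputes each model's accuracy on the full question set and on a filtered subset. The models, questions, and results are invented toy data, not figures from the study, and `rank_models` is a hypothetical helper.

```python
from typing import Dict, List, Set, Tuple


def rank_models(
    results: Dict[str, Dict[str, bool]],
    question_ids: Set[str],
) -> List[Tuple[str, float]]:
    """Rank models by accuracy on question_ids only, highest first."""
    scores = []
    for model, per_question in results.items():
        answered = [per_question[q] for q in question_ids if q in per_question]
        accuracy = sum(answered) / len(answered) if answered else 0.0
        scores.append((model, accuracy))
    # Sort by accuracy (descending), then by name so ties are deterministic.
    return sorted(scores, key=lambda item: (-item[1], item[0]))


# Toy data: True means the model answered that question correctly.
results = {
    "model_a": {"q1": True, "q2": False, "q3": True, "q4": True},
    "model_b": {"q1": False, "q2": True, "q3": False, "q4": True},
}
all_questions = {"q1", "q2", "q3", "q4"}
vision_dependent = {"q2", "q4"}  # hypothetical output of a B-Clean-style filter

print(rank_models(results, all_questions))     # [('model_a', 0.75), ('model_b', 0.5)]
print(rank_models(results, vision_dependent))  # [('model_b', 1.0), ('model_a', 0.5)]
```

In this toy example, model_a leads overall only because it does well on questions that can be answered from the text, and the order flips once those questions are removed. That is the kind of ranking change the team reported.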

The paper is a preprint that has not yet been peer reviewed, and it does not directly evaluate every medical AI system in clinical use. Nevertheless, the team points out that AI meant to interpret images can produce plausible diagnostic statements without any images, and that existing benchmarks struggle to detect this. For that reason, they argue, the way AI models used in medicine are evaluated needs to be reconsidered.
