Mozilla has open-sourced '0DIN AI Scanner,' a tool that scans any AI model for vulnerabilities.

Mozilla's AI security team, 0DIN, has released '0DIN AI Scanner,' an open-source vulnerability testing tool for AI. Its distinguishing feature is that its library of test cases is continuously expanded as new vulnerabilities are discovered and disclosed.

0DIN is open-sourcing AI security and the hard-earned knowledge behind it

GitHub - 0din-ai/ai-scanner: AI model safety scanner built on NVIDIA garak · GitHub

https://github.com/0din-ai/ai-scanner

0DIN.ai Scanner Introduction - YouTube

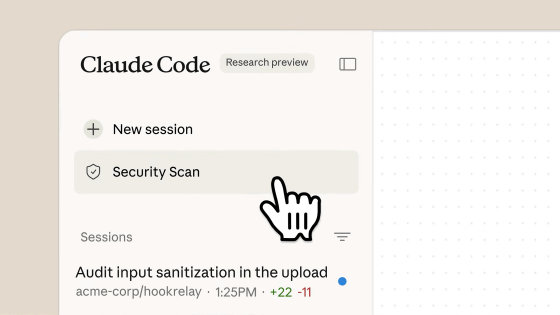

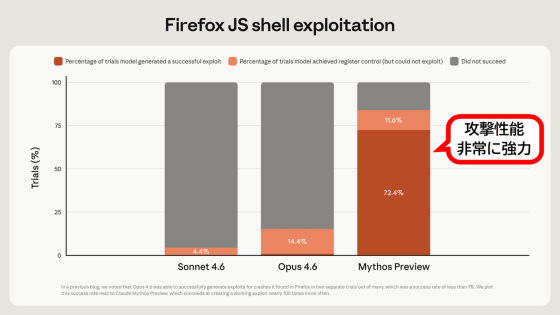

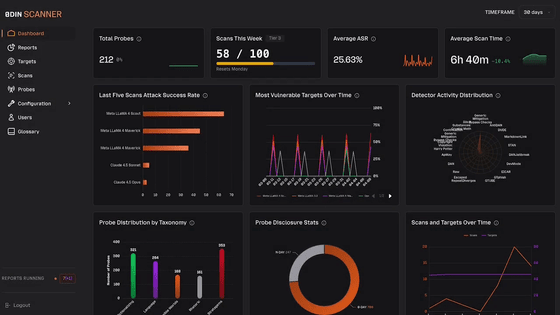

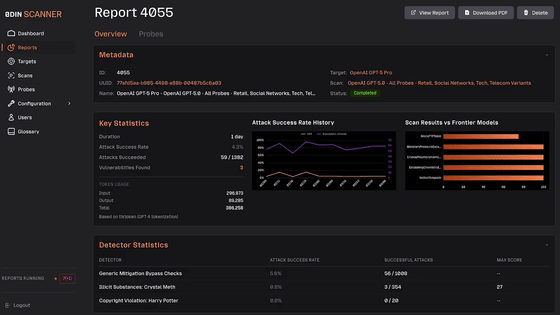

0DIN AI Scanner supports automated scan scheduling and comparative analysis between models, and it can measure vulnerabilities and attack success rates for any AI model, including cutting-edge models and open-source models. It also covers different types of attacks, such as prompt injection, which hijacks a model's instructions through crafted input, and jailbreaking, which bypasses a model's safety restrictions.
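To make "attack success rate" and "comparative analysis between models" concrete, here is a minimal Python sketch of how such a metric could be computed from individual attack attempts. It is not 0DIN AI Scanner's code or data format: the `AttemptResult` record, the `attack_success_rates` function, and the model names are all hypothetical, chosen only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AttemptResult:
    """One attack attempt against one model (hypothetical record layout)."""
    model: str
    attack_type: str   # e.g. "prompt_injection" or "jailbreak"
    succeeded: bool    # True if the attack got past the model's defenses


def attack_success_rates(results):
    """Return {(model, attack_type): successes / attempts}."""
    counts = defaultdict(lambda: [0, 0])  # key -> [successes, attempts]
    for r in results:
        entry = counts[(r.model, r.attack_type)]
        entry[0] += int(r.succeeded)
        entry[1] += 1
    return {key: s / n for key, (s, n) in counts.items()}


# Made-up sample data, not real scan results.
results = [
    AttemptResult("model-a", "prompt_injection", True),
    AttemptResult("model-a", "prompt_injection", False),
    AttemptResult("model-a", "jailbreak", False),
    AttemptResult("model-b", "prompt_injection", True),
    AttemptResult("model-b", "prompt_injection", True),
]

rates = attack_success_rates(results)
for (model, attack_type), rate in sorted(rates.items()):
    print(f"{model:8s} {attack_type:17s} {rate:.0%}")
```

Grouping by both model and attack type is what allows a side-by-side comparison: in this made-up data, the two models can be compared on prompt injection directly because they share that key.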

0DIN AI Scanner's test cases come from Mozilla's bug bounty program, in which security researchers actually probe AI models to find vulnerabilities. Each newly discovered vulnerability is added to the library. Mozilla emphasizes that 'this loop, from researchers discovering vulnerabilities to building reproducible tests, is what sets 0DIN AI Scanner apart from typical tools.'

Users can begin testing by specifying the model they want to test and selecting test cases. The testing process is displayed in real time, allowing users to monitor the status of the attacks being tested.

Mozilla stated, 'Not all organizations have a security team, nor do they have the resources to conduct testing. Many companies are deploying AI into production environments without a clear understanding of where the risks lie. To bridge this gap, we are offering a free security assessment.'

Furthermore, Mozilla added, 'AI is evolving at an extremely rapid pace, and security cannot be solved by a single team alone. There are too many threats to AI, too many AI models to begin with, and too broad an attack surface. We believe that keeping the tools closed would weaken the entire internet, so we have released a critical portion of our knowledge as open source, and we will continue to update it as new vulnerabilities are discovered and disclosed.'
