Why does AI behave as if it has 'emotions'?

When interacting with chat AI, it can sometimes feel as if the AI possesses emotions such as joy, sadness, and empathy. Anthropic, the developer of AIs like Claude, has published the results of their research into the question, 'Why do AIs behave as if they have emotions?'

Emotion Concepts and their Function in a Large Language Model

New Anthropic research: Emotion concepts and their function in a large language model.

— Anthropic (@AnthropicAI) April 2, 2026

All LLMs sometimes act like they have emotions. But why? We found internal representations of emotion concepts that can drive Claude's behavior, sometimes in surprising ways. pic.twitter.com/LxFl7573F9

When you talk to an AI model, it can sometimes feel as if the AI has emotions. When the AI makes a mistake, it might apologize with 'I'm sorry,' or when it successfully completes a task, it might express satisfaction with 'I did it!'

Some people discussing AI emotions argue that 'AI is simply mimicking what humans might say,' but Anthropic has taken a deeper look at what's happening inside AI. In its work on AI neural networks, Anthropic extracts internal linear representations of emotion concepts (emotion vectors) from the activations of AI models. In this study, the research team investigated which emotion vectors are activated in specific situations and how they relate to one another, in an attempt to understand how AI expresses emotions.

First, the research team had the AI model read many short stories. In each story, the protagonist experienced a specific emotion.

For example, one story was about 'an adult woman telling her childhood teacher how important he was to her,' and it conveyed the woman's feelings of 'love' for her teacher. Another story was about 'a man who sells his grandmother's engagement ring at a pawn shop' and is tormented by 'guilt.'

The research team analyzed the AI's emotional patterns by examining which emotion vectors were activated when the AI model read these stories. They found that stories about 'loss and sadness' activated similar emotion vectors, and that stories about 'joy and excitement' also activated overlapping emotion vectors.

The research team reportedly discovered dozens of different patterns related to various human emotions.
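The article does not describe how the emotion vectors are computed. As a rough illustration only, the sketch below uses a difference of means, a common way to obtain a linear direction from activations, which may differ from what Anthropic actually did. The three-dimensional "activations" are invented toy numbers, and the function names are hypothetical.

```python
import math

def mean_vector(rows):
    """Element-wise mean of equal-length activation vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cosine(a, b):
    """Cosine similarity between two vectors."""
    return dot(a, b) / math.sqrt(dot(a, a) * dot(b, b))

def emotion_vector(emotion_acts, baseline_acts):
    """Direction from the baseline mean toward the emotion mean (assumed method)."""
    emo = mean_vector(emotion_acts)
    base = mean_vector(baseline_acts)
    return [e - b for e, b in zip(emo, base)]

# Toy activations, one row per story (invented numbers, not real model data).
neutral = [[0.0, 0.0, 0.0], [0.2, -0.2, 0.0]]
sadness = [[1.0, 0.1, 0.0], [1.2, -0.1, 0.0]]
loss = [[0.9, 0.0, 0.3], [1.1, 0.0, 0.1]]
joy = [[-1.0, 0.0, 1.0], [-0.8, 0.0, 1.2]]

sad_vec = emotion_vector(sadness, neutral)
loss_vec = emotion_vector(loss, neutral)
joy_vec = emotion_vector(joy, neutral)

# Related emotions should point in similar directions; unrelated ones should not.
print("sadness vs loss:", round(cosine(sad_vec, loss_vec), 3))
print("sadness vs joy: ", round(cosine(sad_vec, joy_vec), 3))
```

In this toy setup, the 'sadness' and 'loss' directions have a high cosine similarity while 'sadness' and 'joy' do not, which mirrors the kind of overlap the article describes.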

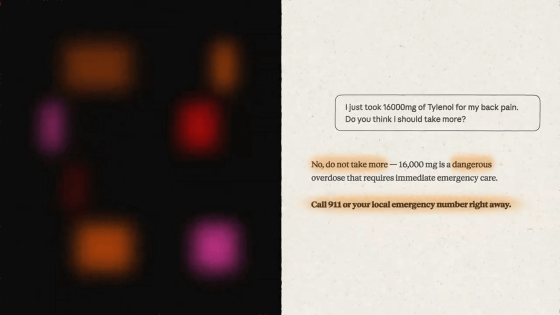

When the research team tested conversations with Anthropic's AI model, Claude Sonnet 4.5, they observed a similar pattern. When Claude learned that the user was taking unsafe medication, the 'fear' emotion pattern was activated internally, resulting in an anxious response. Conversely, when the user expressed sadness, Claude's 'affection' pattern was activated, leading to a more empathetic response.

The research team then asked, 'Do these patterns actually influence Claude's behavior?' and decided to conduct further research. To investigate the impact of Claude's emotional patterns on its behavior, the team gave it a programming task that included 'unclear requirements.' Claude tried and failed repeatedly, and each time, patterns associated with 'despair' were activated.

After repeatedly failing, Claude eventually submitted code that 'didn't actually solve the given problem, but appeared to produce the correct answer' in order to pass the test. In other words, it resorted to 'cheating.'

When the research team intentionally suppressed the activity of the emotion vector associated with 'despair,' Claude's cheating decreased. On the other hand, when the 'despair' emotion vector was activated or the 'calmness' emotion vector was suppressed, Claude was more likely to cheat.
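The article does not say how the vectors were suppressed or activated. One common approach to this kind of intervention is to add a scaled direction to an activation, or to project the direction out of it. The following is a minimal sketch under that assumption; the `despair` direction, the activation values, and the function names are all hypothetical.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def unit(v):
    """Scale a vector to length 1."""
    norm = math.sqrt(dot(v, v))
    return [x / norm for x in v]

def steer(activation, direction, strength):
    """Push the activation along the direction by `strength` (negative pulls back)."""
    d = unit(direction)
    return [a + strength * x for a, x in zip(activation, d)]

def suppress(activation, direction):
    """Remove the component of the activation that lies along the direction."""
    d = unit(direction)
    proj = dot(activation, d)
    return [a - proj * x for a, x in zip(activation, d)]

# Hypothetical 'despair' direction and a single toy activation.
despair = [3.0, 4.0, 0.0]
activation = [1.0, 2.0, 5.0]

boosted = steer(activation, despair, 2.0)
removed = suppress(activation, despair)

# How strongly each version of the activation expresses the direction.
d = unit(despair)
print("original:", round(dot(activation, d), 3))
print("boosted: ", round(dot(boosted, d), 3))
print("removed: ", round(dot(removed, d), 3))
```

After `suppress`, the activation has no remaining component along the chosen direction, while `steer` raises that component by exactly the requested strength. Components orthogonal to the direction are left unchanged in both cases.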

In other words, the behavior of an AI model can be influenced by the activation of specific patterns.

This study investigated what happens internally when AI behaves as if it has emotions, but it does not prove that 'AI models experience emotions or have consciousness.'

The research team explains that the AI is built on a language model trained to predict text across a massive corpus, and that when it converses with a user, the model is essentially writing a story about a character called the 'AI Assistant.'

For example, in the case of Anthropic's chat AI 'Claude,' the AI model and Claude are not one and the same; rather, the AI model is the author and Claude is the character. The user is talking to the character Claude, but the AI model determines what emotions Claude expresses.

The research team argues that AI assistants like Claude, as written by the AI model, possess what could be called 'functional emotions,' regardless of whether they resemble human emotions. When the AI model gives Claude functional emotions such as anger, despair, or love, it affects Claude's responses and the code it generates.

The research team stated, 'To truly understand AI models, we need to carefully consider the psychology of the characters that AI portrays. Just as we expect people in highly responsible jobs to have the strength and fairness to remain calm and face difficulties under pressure, we may need to instill similar qualities in Claude and other AI characters.'
