Mistral AI has released 'Leanstral,' an open-source proof verification platform for reliable AI coding, aiming to overcome the critical bottleneck of 'human review.'

French AI startup

Leanstral: Open-Source foundation for trustworthy vibe-coding | Mistral AI

https://mistral.ai/news/leanstral

The Lean 4 Theorem Prover and Programming Language | Automated Deduction – CADE 28

https://dl.acm.org/doi/10.1007/978-3-030-79876-5_37

While AI is capable of advanced reasoning, mathematical proofs, and coding, humans still need to perform final checks and verifications to ensure that the tasks completed by AI are problem-free. However, with cutting-edge research mathematics and complex software, verification is difficult, and the time and expertise required for manual human review are the biggest obstacles to engineering speed.

Therefore, Mistral AI envisions a generation of coding agents that are more useful not only for performing tasks but also for formally proving the correctness of implementations. As part of this, they have created 'Leanstral,' the first open-source code agent designed for 'Lean 4,' a proof assistance system used in fields such as mathematical research and software verification.

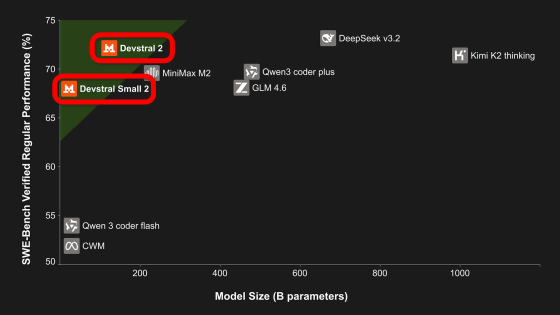

Leanstral aims to enable AI to assist in proof construction and verification, employing an architecture called Mixture-of-Experts (MoE). The MoE architecture is optimized for proof engineering tasks, and because it actively selects and computes only the necessary specialized modules, it is said to achieve both high performance and low computational cost by using only a portion of the parameters in the actual calculation, even if the total number of parameters in the model is large. By using Lean as a verifier and simultaneously generating and verifying multiple inference results, Leanstral achieves high performance and cost efficiency compared to existing closed-source competing products.

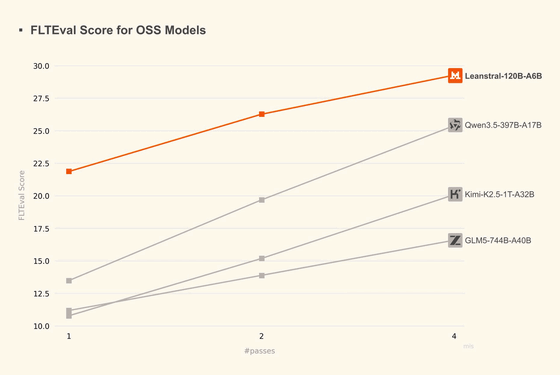

The following compares the FLTEval score, which benchmarks the completion of formal proofs and the correct definition of new mathematical concepts, with the scores of major open-source models (Qwen3.5 397B-A17B, Kimi-K2.5 1T-A32B, GLM5 744B-A40B). The horizontal axis, 'pass,' represents the number of attempts for the same problem, and the vertical axis represents the benchmark score. Even the most powerful of the competing open models, Qwen3.5 397B-A17B, reached a score of '25.4' in 4 attempts, but Leanstral achieved a better score of '26.3' in 2 attempts and extended its score to '29.3' in 4 attempts.

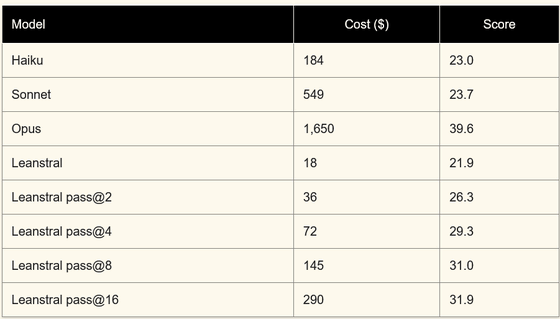

The following table compares the cost-effectiveness of major coding agents (Claude Opus 4.6, Sonnet 4.6, Haiku 4.5) with Leanstral. It shows that Leanstral requires significantly less execution cost to achieve the same score as Sonnet 4.6 and Haiku 4.5. While Claude Opus 4.6 has a very high score of '39.6', its execution cost is approximately 92 times that of running Leanstral twice, highlighting Leanstral's superior cost-effectiveness.

Leanstral is released under the Apache 2.0 license through agent mode within Mistral Vibe and a free API endpoint, allowing researchers and developers to freely use and modify it. A technical report detailing the training methodology and a new evaluation suite, 'FLTEval,' designed to move away from evaluation heavily reliant on competitive mathematics, are also planned for release.

Related Posts:

in AI, Posted by log1e_dh