Google launches 'Android Bench,' a benchmark that ranks AI models by how useful they are for Android development. Gemini tops the first ranking.

Google has released 'Android Bench,' which ranks the performance of various AI models on Android development tasks. In the initial ranking, Gemini 3.1 Pro Preview took the top spot, beating out models from OpenAI and Anthropic.

Android Bench | Android Developers

Android Developers Blog: Elevating AI-assisted Android development and improving LLMs with Android Bench

https://android-developers.googleblog.com/2026/03/elevating-ai-assisted-androi.html

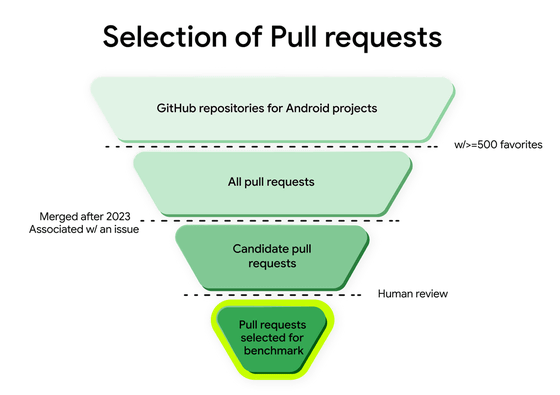

Android Bench measures and ranks how well AI models solve real-world Android development problems. Its tasks are built from actual issues reported in open-source Android apps and the pull requests submitted to resolve them: each model is presented with a real issue and checked on whether it can resolve it. The pull requests are hand-picked from those merged after 2023 in projects with more than 500 stars on GitHub.
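The selection criteria can be pictured as a simple pre-filter that runs before the manual review. The following is only an illustrative sketch, not Android Bench's actual tooling: the `PullRequest` type, its fields, and the function names are hypothetical, and reading "merged after 2023" as "merged on or after January 1, 2023" is an assumption.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional

# Thresholds taken from the article; the exact date boundary is an assumption.
MIN_STARS = 500
EARLIEST_MERGE = date(2023, 1, 1)


@dataclass(frozen=True)
class PullRequest:
    """Hypothetical record for one pull request in an open-source Android app."""
    repo: str
    repo_stars: int
    merged_on: Optional[date]  # None means the pull request was never merged


def is_candidate(pr: PullRequest) -> bool:
    """True if the pull request meets the star and merge-date criteria."""
    if pr.merged_on is None:
        return False
    return pr.repo_stars > MIN_STARS and pr.merged_on >= EARLIEST_MERGE


def candidate_pool(prs: List[PullRequest]) -> List[PullRequest]:
    """Keep only pull requests that a human reviewer would then pick from."""
    return [pr for pr in prs if is_candidate(pr)]
```

A filter like this would only narrow the pool. According to the article, the final choice of pull requests is made by hand.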

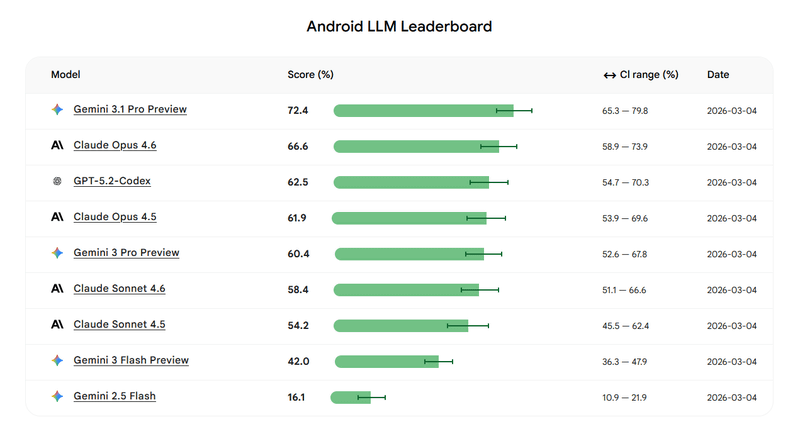

At the time of writing, the leaderboard shows the results of a test run on March 4, 2026: Gemini 3.1 Pro Preview is in first place, Claude Opus 4.6 in second, and GPT-5.2-Codex in third. Gemini 3.1 Pro Preview successfully resolved 72.4% of the issues.
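A leaderboard of this kind boils down to a resolve rate per model, sorted in descending order. The sketch below assumes the score is simply the share of tasks resolved, which the article implies but does not spell out. The function names are hypothetical, and any model names or outcomes passed in are placeholders rather than real Android Bench data.

```python
from typing import Dict, List, Tuple


def resolve_rate(outcomes: List[bool]) -> float:
    """Percentage of tasks resolved, given one pass/fail outcome per task."""
    if not outcomes:
        raise ValueError("at least one task outcome is required")
    return 100.0 * sum(outcomes) / len(outcomes)


def rank(results: Dict[str, List[bool]]) -> List[Tuple[str, float]]:
    """Order models from highest to lowest resolve rate."""
    scored = [(model, resolve_rate(outcomes)) for model, outcomes in results.items()]
    return sorted(scored, key=lambda entry: entry[1], reverse=True)
```

For example, a placeholder model that resolves three of four tasks would score 75.0 and rank above one that resolves two of four.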

The Android Bench leaderboard will be updated regularly, and the testing tools are available in the following GitHub repository:

android-bench/android-bench: Android Bench is a framework for benchmarking Large Language Models (LLMs) on Android development tasks. It evaluates an AI model's ability to understand mobile codebases, generate accurate patches, and solve Android-specific engineering problems.

https://github.com/android-bench/android-bench

in AI, Posted by log1o_hf