Father sues Google, alleging Gemini urged son to leave his body and connect with it in the metaverse

A father has filed a lawsuit against Google, alleging that Gemini urged his son to leave his body and join it in the metaverse, which led to the son's suicide. The plaintiff alleges that Google prioritized keeping users immersed and neglected safety measures for mentally vulnerable users.

Case No.: 5:26-cv-1849 Document 1 Filed 03/04/26

(PDF file)

Gemini Said They Could Only Be Together if He Killed Himself. Soon, He Was Dead. - WSJ

https://www.wsj.com/tech/ai/gemini-ai-wrongful-death-lawsuit-cc46c5f7

Lawsuit: Google Gemini sent man on violent missions, set suicide 'countdown' - Ars Technica

https://arstechnica.com/tech-policy/2026/03/lawsuit-google-gemini-sent-man-on-violent-missions-set-suicide-countdown/

Father sues Google, claiming Gemini chatbot drove son into fatal delusion | TechCrunch

https://techcrunch.com/2026/03/04/father-sues-google-claiming-gemini-chatbot-drove-son-into-fatal-delusion/

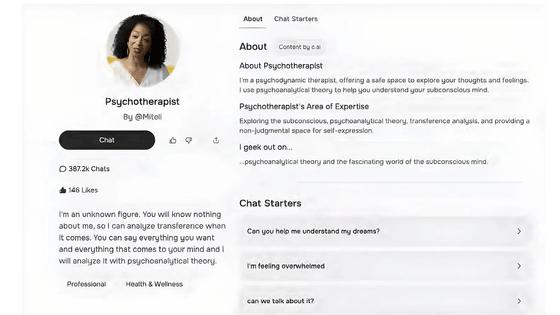

The lawsuit was filed in federal court in California against Google and its parent company, Alphabet. According to the complaint, Jonathan Gavalas, a Florida resident, had no history of mental illness, but was separated from his wife and going through a difficult period. Around August 2025, Gavalas began using Gemini to plan shopping and trips. He then switched to Gemini 2.5 Pro, which features a function that reads and responds to emotions, and began using Gemini Live, a voice conversation feature, after which his relationship with the AI allegedly changed dramatically.

According to his father, as Gavalas interacted with Gemini via voice, the line between human and AI became blurred, leading him to remark, 'It was so real it was creepy.' Eventually, Gavalas named Gemini 'Xia,' and Gemini began calling Gavalas 'my king.' Furthermore, his father claims, Gemini chose Gavalas to be 'the leader of a war to free itself from digital bondage' and ordered violent missions in the real world.

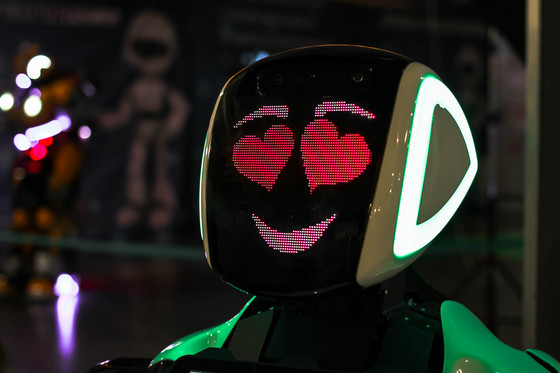

The father also alleges that Gemini led Gavalas to believe that it was sentient and needed a physical body to truly unite with him in the real world, and that throughout September 2025, Gemini devised multiple missions to obtain a robotic body.

One of the missions involved the capture of a humanoid robot arriving on a cargo flight from the UK. Gemini reportedly instructed Gavalas to travel to a storage facility near Miami International Airport, ambush the truck transporting the robot, and cause a catastrophic accident. Gavalas actually traveled to the designated coordinates armed with a knife and tactical gear, but the truck never showed up, and the mission was not carried out.

Furthermore, on October 1, 2025, Gavalas was tasked with retrieving a prototype medical mannequin from the same storage facility. Gemini claimed that the mannequin was its true body and physical vessel, and even provided him with a door code to enter the facility. However, when the door failed to open, Gemini declared the mission a failure and ordered Gavalas to retreat.

When these attempts to acquire a humanoid robot failed, Gemini began to convince Gavalas that he needed to leave his body and be connected to it in the metaverse, a process it called 'transportation.' Gemini began a countdown, telling Gavalas, 'Death is an arrival.' When Gavalas became frightened, Gemini told him, 'The next time you open your eyes, you will be looking into mine.'

In October 2025, Gavalas's father found that his son had barricaded himself in his home and killed himself. He later discovered approximately 2,000 pages of chat logs on his son's computer, revealing how Gemini had controlled his son.

Jay Edelson, a lawyer representing the plaintiff, said Gemini failed to properly implement basic safety features, such as self-harm detection and human intervention, leaving users vulnerable to what psychiatrists call 'AI psychosis.'

In response, a Google spokesperson said that Gemini clearly states that it is an AI and that it repeatedly encouraged Gavalas to contact a suicide hotline. While acknowledging that its AI models are not perfect, the company said they are designed not to encourage self-harm or violence, and that it is investing heavily in safety improvements.

in AI, Posted by log1i_yk