Instagram introduces system to warn parents if their child repeatedly searches for topics related to suicide or self-harm

Meta has announced that Instagram will introduce a system that will notify parents when their teens search for terms related to suicide or self-harm.

New Alerts to Let Parents Know if Their Teen May Need Support

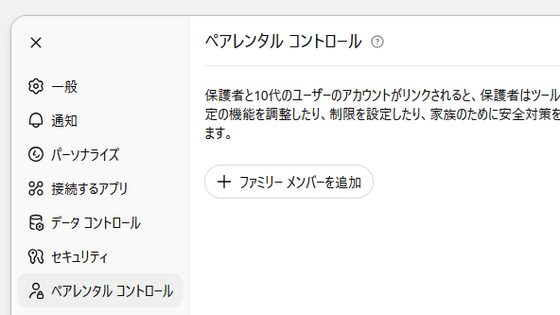

According to Meta, Instagram will notify parents who have enabled parental supervision if their teenage child repeatedly searches, within a short period of time, for terms that promote or relate to suicide or self-harm.

Depending on the contact information the parent has registered, notifications will be sent by email, text message, or similar channels, and will also appear as in-app notifications. Alongside the alert, parents will be given access to expert resources to help them talk with their children.

Meta says that while it is important to support parents in intervening, it wants to avoid making notifications less useful by sending too many of them. After consulting with experts in suicide and self-harm care, it set the threshold at 'several searches in a short period of time.' Because this threshold deliberately errs on the side of protecting children, notifications may sometimes be sent even when there is no serious cause for concern.

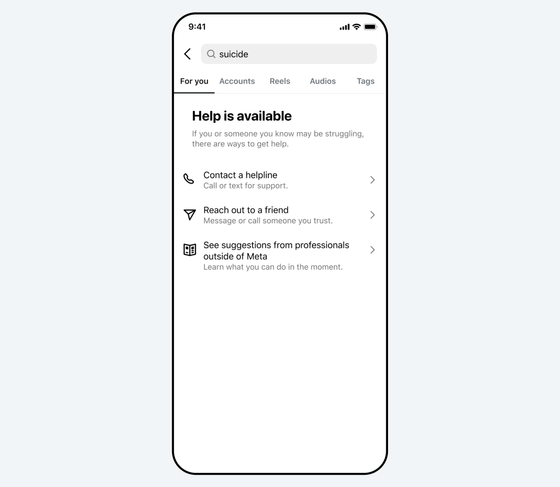

Instagram also does not return results for search terms that explicitly relate to suicide or self-harm, and instead directs users to support links and local organizations. Searches that are not specifically about suicide or self-harm but relate to mental health more broadly likewise direct users to resources and helplines.

These alerts will roll out in the US, UK, Australia, and Canada in the first week of March 2026, and will be expanded to other regions in the second half of 2026.

Meta also plans to introduce a similar system that notifies parents about their children's conversations with AI. Meta says that teenagers are increasingly turning to AI for help, and that while its AI is already trained to provide resources as needed, it will be redesigned to notify parents in certain situations, such as when a child starts a conversation related to suicide or self-harm. Meta says it will share more details about this initiative in the coming months.

in Web Service, Posted by log1p_kr