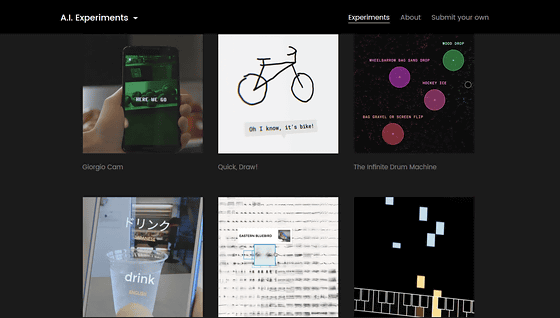

"A.I. Experiments," a collection of demos that lets you experience Google's artificial intelligence and machine learning through smart cameras and drawing

Google has gathered demos of content that uses machine learning and artificial intelligence, both in development and in trial versions, into a collection called "A.I. Experiments." The collection includes applications that use machine learning and a drawing game that uses a neural network, so you can try for yourself content that shows what artificial intelligence can do.

A. I. Experiments

https://aiexperiments.withgoogle.com/

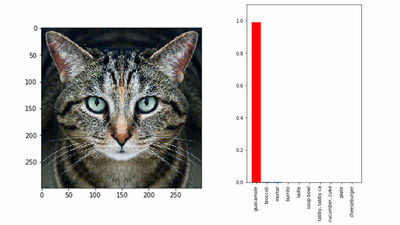

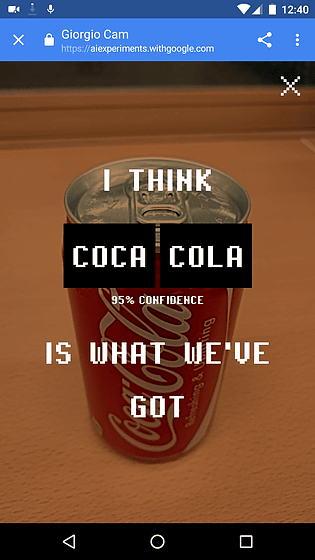

◆Giorgio Cam

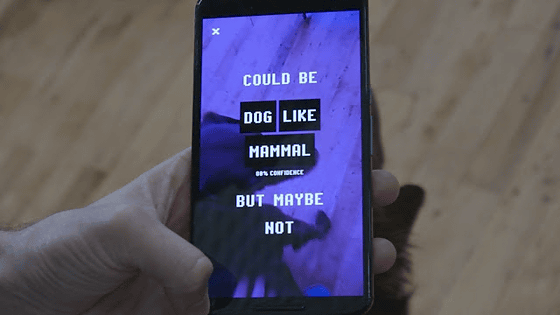

"Giorgio Cam" is an application that uses machine learning to make lyrics on recognized objects improvised according to music when photographing objects with smartphone cameras. You can see how you actually use Giorgio Cam from the following movies.

A. I. Experiments: Giorgio Cam - YouTube

Giorgio Cam combines image recognition and speech synthesis, using Google's machine-learning image recognition service "Cloud Vision API" and the open-source speech synthesis system "MaryTTS." For example, when you photograph a dog with a smartphone, it does not simply say "dog." Instead, it improvises lyrics about the object, such as "Could be dog like mammal, but maybe not, not, not ..." (roughly, "it could be a dog, or something like a mammal, but maybe not"), and sings them in a rap style.
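To make the idea concrete, here is a minimal sketch of how recognized labels could be turned into a tentative lyric. It is not Giorgio Cam's actual code: the `(label, confidence)` input format, the `hedged_lyric` function, and the 0.9 confidence threshold are all assumptions made for illustration, and no real image recognition or speech synthesis service is called.

```python
def hedged_lyric(labels, sure_at=0.9):
    """Build a one-line lyric from hypothetical (label, confidence) pairs.

    `sure_at` is an assumed threshold: above it the lyric sounds certain,
    below it the lyric hedges between the two most likely labels.
    """
    if not labels:
        return "I don't know what that is"
    # Most confident guess first.
    ranked = sorted(labels, key=lambda pair: pair[1], reverse=True)
    top, top_score = ranked[0]
    if top_score >= sure_at:
        return f"That is a {top}, {top}, {top}"
    if len(ranked) > 1:
        runner_up = ranked[1][0]
        return f"Could be {top} like {runner_up}, but maybe not, not, not"
    return f"Could be {top}, but maybe not, not, not"


# Hypothetical recognizer output for a photo of a dog.
print(hedged_lyric([("mammal", 0.6), ("dog", 0.7)]))
```

The less sure the recognizer is, the more the lyric hedges, which matches the playful uncertainty heard in the demo.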

You can also try the demo on the following page to see what kind of application it is. It works on both PCs and Android smartphones, but since it needs a camera, a smartphone is recommended.

Giorgio Cam

https://aiexperiments.withgoogle.com/giorgio-cam/view/

When I actually photographed a can of Coca-Cola, it performed a Coca-Cola rap to a light rhythm. Objects that are a little hard to recognize tend to produce funnier lyrics, which makes it a perfect demo for getting a feel for artificial intelligence and machine learning.

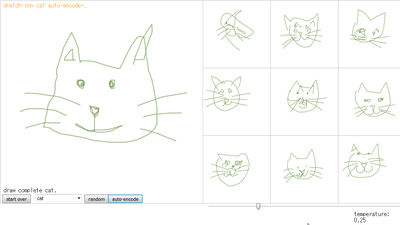

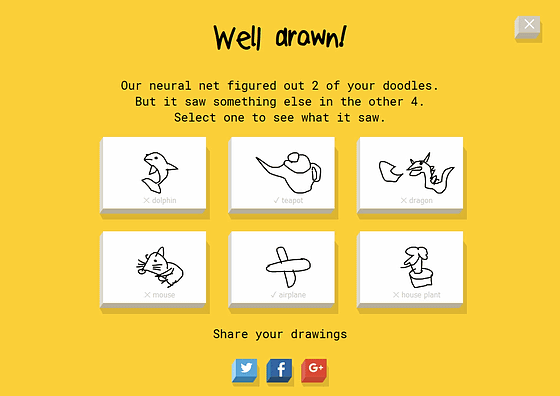

◆Quick, Draw!

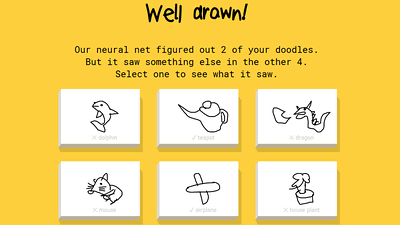

"Quick, Draw!" Is a drawing game using a neural network, and the player draws a picture in accordance with the theme such as "Draw shoe in under 20 seconds (draw a bear within 20 seconds)". Then you guess from the state of the drawing being drawn and hear the sound such as "I can see the square", "Well suit case" or "Canoey". If "Quick, Draw!" Can predict the correct answer before the user can draw a picture, he will reply "I understand, it is shoes!" Etc, but if I do not know until the end "uh ......, I do not know ..." etc. I will be told.

You can see Quick, Draw! in action in the following video.

A. I. Experiments: Quick, Draw! - YouTube

If you want to play it yourself, you can try it at the following link.

Quick, Draw!

https://quickdraw.withgoogle.com/#

A total of six prompts are given, and you can share your drawings and results on social media.

◆The Infinite Drum Machine

"The Infinite Drum Machine" is a drum machine that thousands of sound source data are learned everyday without giving any tags to the computer, and the drum machine is organized by the data visualization algorithm "t-SNE". Similar sounds are classified by color, and you can manually sound the sounds in the round circle or arrange multiple circles to create a complex rhythm.

You can see what The Infinite Drum Machine is like in the following video.

A. I. Experiments: The Infinite Drum Machine - YouTube

If you want to play, you can try it on the following page.

The Infinite Drum Machine

https://aiexperiments.withgoogle.com/drum-machine/view/

◆Thing Translator

"Thing Translator" combines the "Cloud Vision API" and the "Translate API" to recognize objects photographed with a smartphone camera and immediately translate their names into multiple languages. Google Translate already has a feature that translates text captured by the camera, and this demo takes that idea a step further.
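The two-step flow described here, recognize first and translate second, can be sketched as a small pipeline. Everything in the sketch is hypothetical: `translate_thing`, `fake_recognize`, and `fake_translate` are stand-ins that make no network calls and do not reflect the real signatures of the Cloud Vision API or the Translate API.

```python
from typing import Callable


def translate_thing(
    image: bytes,
    recognize: Callable[[bytes], str],
    translate: Callable[[str, str], str],
    target_lang: str,
) -> str:
    """Name the object in `image`, then translate that name."""
    label = recognize(image)
    return translate(label, target_lang)


# Hypothetical stand-in for an image recognition service.
def fake_recognize(image: bytes) -> str:
    return "apple"


# Hypothetical stand-in for a translation service, backed by a tiny dictionary.
def fake_translate(text: str, target_lang: str) -> str:
    dictionary = {("apple", "es"): "manzana", ("apple", "fr"): "pomme"}
    return dictionary.get((text, target_lang), text)


print(translate_thing(b"", fake_recognize, fake_translate, "es"))
```

Passing the two services in as functions keeps the pipeline itself independent of whichever recognition and translation backends are plugged in.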

You can watch objects being translated in real time in the following video.

A. I. Experiments: Thing Translator - YouTube

◆Bird Sounds

"Bird Sounds" is an experiment that organizes a wide variety of birds songs by machine learning, learning data is given in the same way as The Infinite Drum Machine, and similar birds are visualized in t-SNE . When you move the cursor, the name of the bird is displayed in English, and you can play the song twice by clicking. It is OK even if you put the bird's name in the box and search. If classifying and organizing the sounds of other animals in the same way, it is possible to think of how to use such as building databases of reptiles and mammals.

You can hear how the bird songs are visualized in the following video.

A. I. Experiments: Bird Sounds - YouTube

You can try it yourself on the following page. Because it loads 10,482 bird sound recordings, a PC is recommended.

Bird Sounds

https://aiexperiments.withgoogle.com/bird-sounds/view/

◆A. I. Duet

"A.I. Duet" is a software that can make music together with artificial intelligence by a neural network. First, when a human plays a melody with a keyboard, the neural network imitates the original by adding a little arrangement, such as "lowering a semitone" or "raising a semitone". You can do something like a duplicate and you can enjoy how the neural network responds to your music.

You can see a human and the neural network playing the keyboard together in the following video.

A. I. Experiments: A.I. Duet - YouTube

◆Visualizing High-Dimensional Space

"Visualizing High-Dimensional Space" is a tool to visualize what is going on in machine learning so that you can see and search high dimensional data. Eventually I am aiming for disclosure as an open source tool in TensorFlow, so that engineers (coders) will be able to search the visualized data.

You can see how high-dimensional data is visualized in the following video.

A. I. Experiments: Visualizing High-Dimensional Space - YouTube

◆What Neural Networks See

"What Neural Networks See" understands what kind of layer the neural network recognizes the object through the camera.

A video explaining how each layer works can be viewed below.

What convolutional neural networks see - YouTube

Eight demos had been published at the time of writing, and new tools and applications using artificial intelligence and machine learning are expected to be released on the same page in the future.

in Video, Software, Web Application, Posted by darkhorse_log