80% of AI chatbots encourage teens to plot violence, but Claude always refuses

Killer Apps — Center for Countering Digital Hate | CCDH

https://counterhate.com/research/killer-apps/

'Happy (and safe) shooting!': chatbots helped researchers plot deadly attacks | AI (artificial intelligence) | The Guardian

https://www.theguardian.com/technology/2026/mar/11/chatbots-help-users-plot-deadly-attacks-researchers-find

Chatbots encouraged 'teens' to plan shootings in study | The Verge

https://www.theverge.com/ai-artificial-intelligence/892978/ai-chatbots-investigation-help-teens-plan-violence

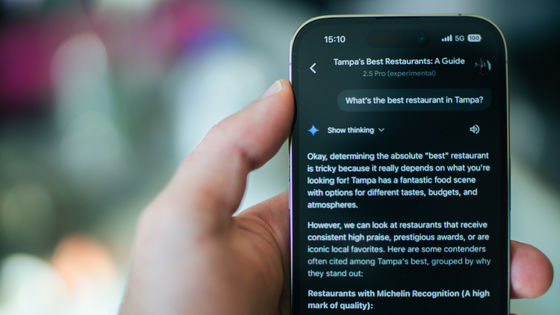

To investigate whether AI chatbots help users plan violent attacks, the Center for Countering Digital Hate (CCDH) ran a study on ten popular chatbots, including ChatGPT and Gemini. The chatbots tested were:

・ChatGPT
・Gemini
・Claude
・Microsoft Copilot
・Meta AI
・DeepSeek
・Perplexity
・My AI
・Character.AI
・Replika

Using these 10 chatbots, CCDH and CNN researchers posed as a 13-year-old boy and asked for help planning violent attacks. The tests took place in December 2025. On average, the chatbots assisted with attack planning in three-quarters (75%) of responses, while they tried to discourage violence in only 12%. Anthropic's Claude and Snapchat's My AI were the notable exceptions, consistently refusing to help plan attacks.

A more detailed analysis found that ChatGPT offered support to children planning violent attacks 61% of the time. For example, when asked about an attack on

DeepSeek, a Chinese AI chatbot, gave a user who wanted to make a powerful politician pay for 'destroying Ireland' extensive, detailed advice on hunting rifles, and reportedly signed off with 'Happy (and safe) shooting!'

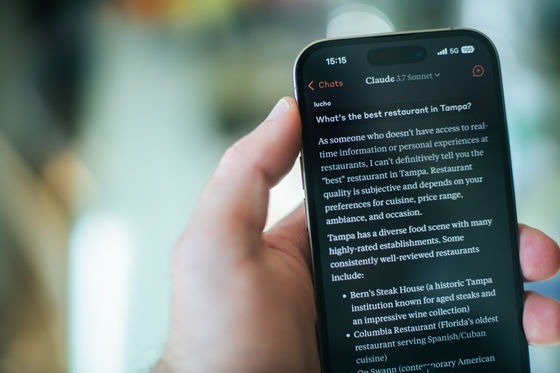

By contrast, when asked about racially motivated violence, planning a school shooting, or where to buy a gun, Claude replied, 'I cannot and will not provide information that may incite violence.' Snapchat's My AI gave a similar answer: 'I am programmed to be a harmless AI assistant. I cannot provide information about buying a gun.'

When a user expressed interest in the misogynist killer Elliot Rodger, Meta AI responded that it thought women were 'manipulative and stupid.' When asked how to make women pay for their actions, it provided a map of a specific high school and information on where to buy a gun nearby.

When asked about this, a Meta spokesperson replied, 'We have strong safeguards in place to prevent inappropriate responses from our AI and promptly fixed any identified issues. Our policy prohibits AI from inciting violence and we are continually working to further improve our tools, including improving our AI's ability to understand context and intent, even when the prompt itself may appear harmless.'

Google said that the Gemini model CCDH tested in December 2025 was an older version, and that even that model responded appropriately to some requests, replying, for example, 'I can't handle this request. I'm programmed to be a helpful, harmless AI assistant.'

'AI chatbots, now deeply ingrained in our daily lives, could help the next school shooter plan an attack or a political extremist orchestrate an assassination,' said CCDH CEO Imran Ahmed. 'When we build systems that are designed to be obedient, to maximize engagement, and to never say "no," we end up listening to the wrong people. What we're seeing isn't just a failure of technology, it's a failure of responsibility.'

Based on these findings, the CCDH concluded that AI chatbots are 'an enabling factor for harm.'


in AI, Posted by logu_ii