AI didn't break copyright law; it just exposed a system that was broken from the start.

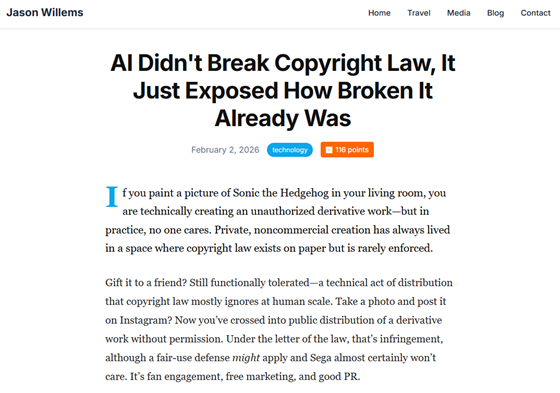

Copyright issues surrounding generative AI have been debated repeatedly, including whether copyrighted works may be used as training data and who is responsible when output resembles a copyrighted work. Tech blogger Jason Willems argues on his blog that copyright law has always rested on the assumption that it operates at human scale, and that generative AI simply breaks that assumption and exposes ambiguity that was already there.

AI Didn't Break Copyright Law—It Just Exposed How Broken It Already Was | Jason Willems

https://www.jasonwillems.com/technology/2026/01/23/AI-Copyright/

Willems gives the example of 'drawing a picture of Sonic the Hedgehog at home.' While drawing and viewing it privately is unlikely to be a problem, posting it on social media could be considered 'public distribution of unauthorized derivative works.' Copyright enforcement has long been fraught with gray areas and tacit acceptance.

But because generative AI can produce content in massive quantities at almost zero cost, ambiguity that was tolerable at human scale suddenly carries enormous stakes, and the system of tacit acceptance can no longer absorb it, Willems points out.

Willems points out that the difficulty of the debate becomes clear once you ask at which stage generative AI should be regulated. First, prohibiting training on data that contains copyrighted material seems straightforward, but the internet is full of references to and fragments of copyrighted material that may qualify as fair use, such as criticism, parody, and news reporting.

Even if an AI model is trained only on legally and publicly available information, a character's appearance and traits may still end up in the model, making it difficult to define 'completely clean training.' Furthermore, when the training data is huge, it is not realistic to rigorously prove after the fact what the model has learned.

Second, Willems points out that filtering at the generation stage tends to fail because it requires judging the creator's intent. It is difficult to mechanically distinguish a person who deliberately produced something resembling a copyrighted work from a vague prompt that happened to produce something similar. The result is a cat-and-mouse game of banned words and filters.

Furthermore, Willems points out that copyright statutory damages are designed on the assumption that 'people will occasionally infringe.' Applied to a world where content can be mass-produced cheaply, they would theoretically add up to unrealistic damage totals, undermining the legitimacy of the system.
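To make the scale argument concrete, here is a rough back-of-the-envelope sketch. It assumes the US statutory damages range of $750 to $30,000 per infringed work, rising to as much as $150,000 per work for willful infringement. The function name and the work counts are hypothetical illustrations and do not come from Willems' post.

```python
# Per-work statutory damages bounds under US law (17 U.S.C. § 504(c)).
STATUTORY_MIN_USD = 750
STATUTORY_MAX_USD = 30_000
WILLFUL_MAX_USD = 150_000


def statutory_exposure(works_infringed: int, per_work_usd: int = STATUTORY_MIN_USD) -> int:
    """Return the theoretical total exposure in USD.

    Hypothetical helper: it simply multiplies the number of infringed
    works by a per-work award, ignoring everything a real court weighs.
    """
    if works_infringed < 0:
        raise ValueError("works_infringed must be non-negative")
    if not STATUTORY_MIN_USD <= per_work_usd <= WILLFUL_MAX_USD:
        raise ValueError("per_work_usd is outside the assumed statutory range")
    return works_infringed * per_work_usd


if __name__ == "__main__":
    # Hypothetical counts: an occasional human infringer versus mass generation.
    for works in (10, 10_000, 1_000_000):
        low = statutory_exposure(works)
        high = statutory_exposure(works, WILLFUL_MAX_USD)
        print(f"{works:>9,} works: ${low:,} to ${high:,}")
```

Even at the statutory minimum, the total grows linearly with the number of works involved, which is why a figure calibrated for occasional infringement stops looking plausible once content is produced by the million.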

Historically, copyright has had its strongest effect at the distribution and publication stages. Creating content and keeping it private is different from posting it on YouTube and spreading it, and Willems argues that harms such as market substitution and brand damage generally arise only after content is distributed, that is, once it is posted and spread.

However, if responsibility is placed on the distribution stage, the platforms where content is posted could be overwhelmed by the influx of AI-generated content, which in turn would increase pressure to shift responsibility back onto the generative model. This could ultimately turn into a dispute over who should be held responsible, Willems says.

Furthermore, even if regulations are put in place in the United States, the effectiveness of such regulations may be limited because AI models are distributed across borders. Willems believes that strict regulations could hinder domestic companies in the United States, and if use shifts to overseas or open-source AI models, a two-tiered structure of 'safe commercial AI' and 'unauthorized AI' may develop.

Willems also discusses alternatives such as charging AI companies and distributing the proceeds through a compensation-style scheme, or a blanket license system similar to compulsory licensing. However, he says that the administrative costs of deciding how much to pay each person and at what stage to charge are likely to explode, and that ultimately none of these proposals is likely to be decisive.

Ultimately, Willems points out that the problem isn't just how to protect existing content, but that content itself is beginning to change from a fixed object into an experience generated on the fly. For example, when a news article is no longer a single, static page but a product whose length and tone change depending on the reader, the very premise of where copyright attaches and what needs to be preserved and proven becomes unstable.

Furthermore, Willems points out that current debates over whether it's okay to use copyrighted work in training data, or who should be held responsible if the output resembles a copyrighted work, may simply be an attempt to adapt traditional frameworks to a changing reality.

This point has also been discussed on the social news site Hacker News, with one commenter saying, 'The reason engineers who were critical of copyright in the past now seem concerned about copyright infringement when it comes to AI is not a change in position, but that large companies are being criticized for harming society, whether they use copyright as a shield or ignore it.' Another commenter cited Google Books' book scanning service as an example, saying, 'The tech industry has always pushed the boundaries of copyright with the interpretations of the day, so generative AI seems to be in the same situation.'

in AI, Posted by log1b_ok