“BodyPix”, which can extract people from live video in real time and recognize each of their body parts

BodyPix works in the field of “segmentation”, which recognizes people and specific objects in images and video.

The TensorFlow Blog: [Updated] BodyPix: Real-time Person Segmentation in the Browser with TensorFlow.js

https://blog.tensorflow.org/2019/11/updated-bodypix-2.html

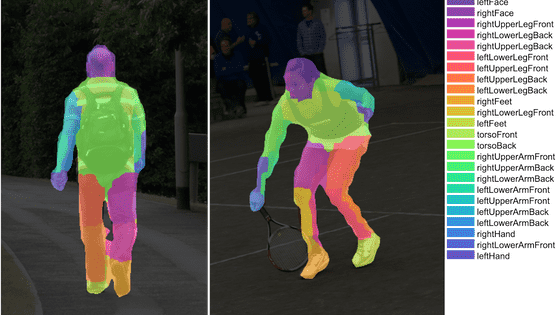

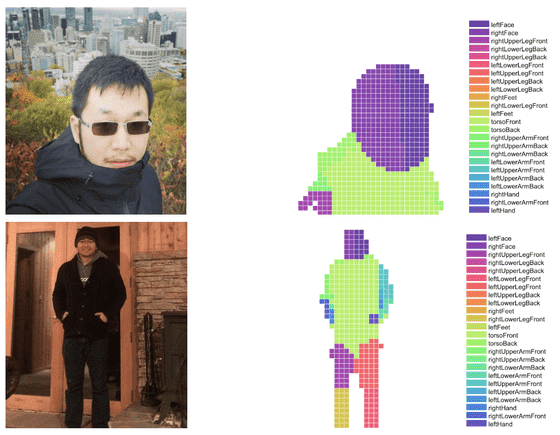

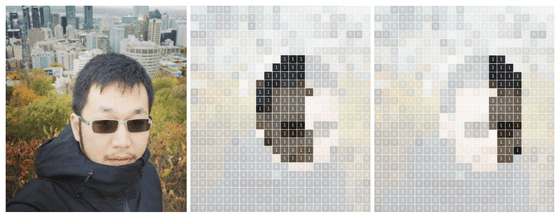

These images show people recognized by “BodyPix”, developed by the research team of the Interactive Telecommunications Program at New York University. Beyond the head, torso, and limbs, BodyPix can color-code the body into as many as 24 parts, for example distinguishing the left limbs from the right.
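To make the idea of “color-coding by part” concrete, here is a minimal sketch that turns a grid of part labels into colors. It assumes a hypothetical labeling where each pixel holds a part id from 0 to 23, or -1 where there is no person; the helper names `make_palette` and `colorize` and the evenly spaced hue palette are illustrative choices, not BodyPix's own colors or API.

```python
import colorsys
from typing import List, Tuple

RGB = Tuple[int, int, int]
NUM_PARTS = 24  # BodyPix distinguishes up to 24 body parts


def make_palette(n: int = NUM_PARTS) -> List[RGB]:
    """Spread n hues evenly around the color wheel (illustrative palette, not BodyPix's)."""
    palette = []
    for i in range(n):
        r, g, b = colorsys.hsv_to_rgb(i / n, 0.8, 1.0)
        palette.append((round(r * 255), round(g * 255), round(b * 255)))
    return palette


def colorize(labels: List[List[int]], palette: List[RGB],
             background: RGB = (0, 0, 0)) -> List[List[RGB]]:
    """Map each pixel's part id to a color; the assumed -1 (no person) maps to background."""
    return [[palette[p] if p >= 0 else background for p in row] for row in labels]


# Usage: a 1x2 label grid with one background pixel and one pixel of part 0.
print(colorize([[-1, 0]], make_palette()))  # [[(0, 0, 0), (255, 51, 51)]]
```

Spacing hues evenly keeps neighboring part ids visually distinct, which is the point of a part map like the ones pictured.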

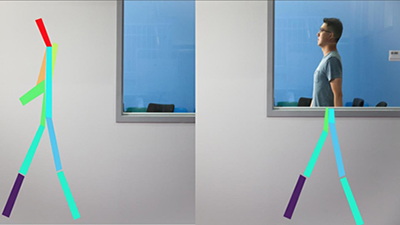

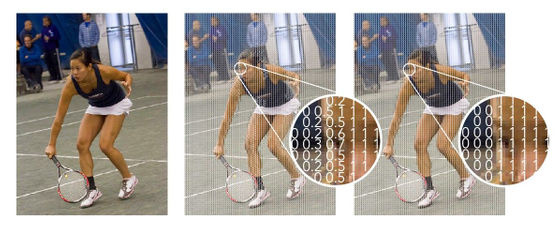

BodyPix segmentation is roughly divided into two stages. In the first stage, “distinguishing people from the background”, the BodyPix algorithm analyzes each video frame pixel by pixel and gives every pixel a score from 0 to 1 indicating how likely it is to be part of a person. A score threshold for what counts as a person is then chosen, and the person is extracted by setting pixels scoring at or below the threshold to 0 and all other pixels to 1.
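The first stage can be sketched as a simple threshold over a grid of per-pixel scores. This is a minimal illustration under assumptions: the scores are made-up numbers, the helper `to_binary_mask` is hypothetical, and 0.7 is only an example threshold. The JavaScript library exposes its own threshold option (`segmentationThreshold`); this Python sketch does not call it.

```python
from typing import List


def to_binary_mask(scores: List[List[float]], threshold: float = 0.7) -> List[List[int]]:
    """Convert per-pixel person scores in [0, 1] into a 0/1 mask.

    Scores above `threshold` become 1 (person); the rest become 0 (background).
    """
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must lie in [0, 1]")
    return [[1 if s > threshold else 0 for s in row] for row in scores]


# A tiny 2x3 "frame" of made-up scores.
frame_scores = [
    [0.05, 0.80, 0.95],
    [0.10, 0.70, 0.60],
]
print(to_binary_mask(frame_scores))  # [[0, 1, 1], [0, 0, 0]]
```

Raising the threshold gives a tighter cut-out around the person at the cost of dropping some borderline pixels; lowering it does the opposite.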

In the next stage, “distinguishing body parts”, the same process is used to determine which body part each pixel belongs to. For example, in the accompanying image, the original image on the far left is evaluated separately for “right half of the face” and “left half of the face”.

Repeating this for every part divides each person in the video into as many as 24 parts.
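The second stage can be sketched as picking, for each person pixel, the body part with the highest score. This is a minimal sketch under assumptions: each pixel is taken to carry one score per part, pixels outside the person mask from the first stage are labeled -1, and the function name `assign_parts` and the three-part example data are hypothetical rather than BodyPix's actual API or output.

```python
from typing import List, Sequence


def assign_parts(part_scores: List[List[Sequence[float]]],
                 person_mask: List[List[int]]) -> List[List[int]]:
    """For each pixel inside the person mask, pick the index of the highest-scoring part.

    part_scores[y][x] holds one score per part (24 in BodyPix's scheme);
    pixels outside the mask are labeled -1 (an assumed "no person" value).
    """
    labels = []
    for score_row, mask_row in zip(part_scores, person_mask):
        row = []
        for scores, is_person in zip(score_row, mask_row):
            if is_person:
                # Index of the highest score; ties go to the lowest part id.
                row.append(max(range(len(scores)), key=scores.__getitem__))
            else:
                row.append(-1)
        labels.append(row)
    return labels


# A 2x2 frame with only 3 illustrative parts per pixel.
part_scores = [
    [[0.9, 0.1, 0.0], [0.2, 0.7, 0.1]],
    [[0.3, 0.3, 0.4], [0.5, 0.4, 0.1]],
]
person_mask = [[1, 1], [1, 0]]
print(assign_parts(part_scores, person_mask))  # [[0, 1], [2, -1]]
```

Restricting the choice to masked pixels means background pixels never receive a part label, even when one of their part scores happens to be high.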

When BodyPix was first released in February 2019, it could process only one person at a time; with the November 2019 update, it can now recognize multiple people in real time.

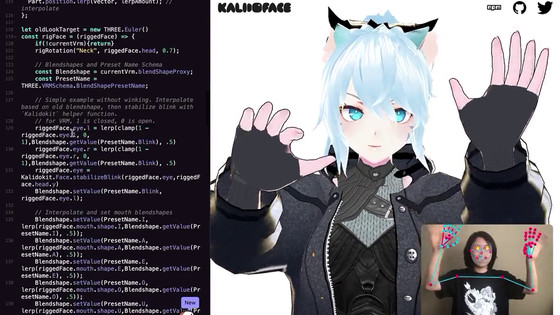

One of BodyPix's main features is that it needs no special equipment: it runs in real time using the webcam built into a laptop or a smartphone camera. BodyPix itself is published on GitHub, and because processing happens on the device rather than on a cloud server, a single PC or smartphone is enough to run it.

The research team previously developed “PoseNet”, which recognizes human poses in real time.

“With BodyPix and PoseNet, you can easily capture motion outdoors rather than in a studio,” says Dan Oved of the Interactive Telecommunications Program.


in Software, Posted by log1l_ks