What is the basic principle that produces colorful images from a sequence of 0s and 1s?


With the evolution of smartphones and cameras, anyone can now shoot high-quality video and edit it at home. TJ Krusinski, a software engineer and video producer at Facebook, explains on his blog how video is represented as digital data rather than on film.

A Mental Model for Video

https://tjkrusinski.com/articles/general/mental_model

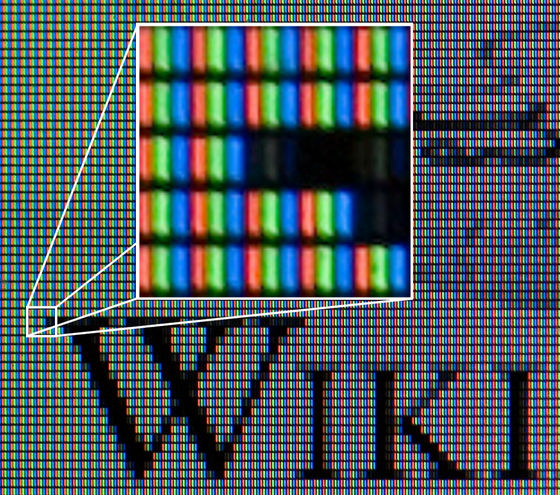

Video is a sequence of still images, and the smallest unit that makes up one frame is the 'pixel'. For example, '1080p', the Full HD video standard, has 1080 pixels in the vertical direction and 1920 pixels in the horizontal direction. In other words, a Full HD video frame consists of 2,073,600 pixels in total. The more pixels there are, the higher the resolution.
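The pixel arithmetic above can be checked with a few lines of Python. This is only an illustrative sketch: the function name `pixel_count` is made up for this example and does not come from Krusinski's article.

```python
def pixel_count(width: int, height: int) -> int:
    """Total number of pixels in one frame of the given size."""
    return width * height


# Full HD (1080p): 1920 pixels across, 1080 pixels down.
full_hd = pixel_count(1920, 1080)
print(full_hd)  # 2073600
```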


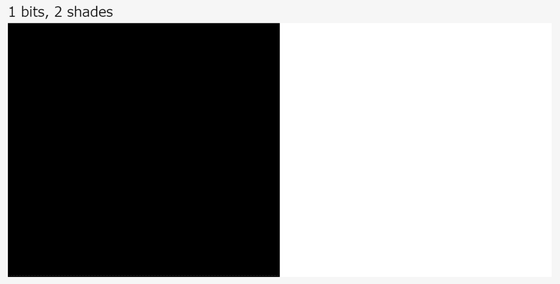

In addition to its coordinates on the screen, each pixel holds numerical values that carry color information such as chromaticity and luminance, and the more bits those values have, the wider the range of shades and colors that can be represented. For example, in a monochrome image where each pixel holds 1 bit of information, that is, only 0 or 1, the only possible shades are white and black, as shown below.

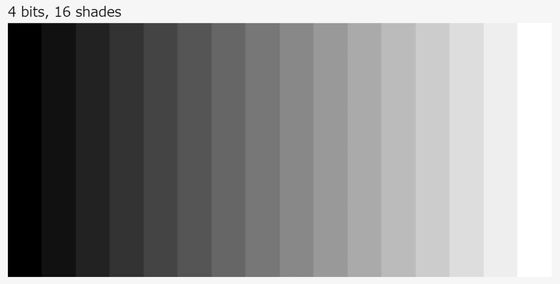

At 4 bits, $2^4 = 16$ levels of gradation can be expressed.

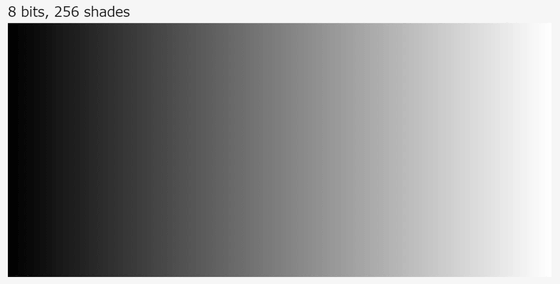

At 8 bits, $2^8 = 256$ levels of gradation can be expressed. The number of bits of information contained in a pixel is called the 'bit depth' (or 'color depth').
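As a rough sketch of how the number of bits limits the available shades, the snippet below counts the gray levels for 1, 4, and 8 bits and maps a brightness between 0.0 and 1.0 onto the nearest level. The helpers `gray_levels` and `quantize` are hypothetical names chosen for this illustration, not part of the original article.

```python
def gray_levels(bits: int) -> int:
    """Number of distinct shades an n-bit grayscale pixel can hold."""
    return 2 ** bits


def quantize(brightness: float, bits: int) -> int:
    """Map a brightness in [0.0, 1.0] to an integer level for the given bit depth."""
    max_level = gray_levels(bits) - 1
    return round(brightness * max_level)


for bits in (1, 4, 8):
    # Prints the bit depth followed by the number of levels: 2, 16, 256.
    print(bits, gray_levels(bits))

# Full brightness maps to the highest level at each depth.
print(quantize(1.0, 1), quantize(1.0, 4), quantize(1.0, 8))  # 1 15 255
```

With only 1 bit, every brightness collapses to 0 or 1, which is why the 1-bit image contains nothing but black and white.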

RGB color images, on the other hand, are expressed by mixing the three primary colors R (red), G (green), and B (blue). Since R, G, and B can each be represented in 256 steps, 256 × 256 × 256 = 16,777,216 colors can be created per pixel. Of course, as the bit depth increases to 10 bits or 24 bits, the number of representable colors increases as well, but human vision is said to distinguish only about 10 million to 100 million colors, so there is little need for a color depth beyond 10-bit color, which can already produce over 1 billion colors.
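The color counts can be reproduced with a short sketch. It also shows one common way to store an 8-bit-per-channel RGB pixel as a single 24-bit integer. That packing layout (red in the high byte) is an assumption made for illustration, and the function names are hypothetical rather than taken from the article.

```python
def color_count(bits_per_channel: int) -> int:
    """Number of colors when R, G, and B each use the given number of bits."""
    levels = 2 ** bits_per_channel
    return levels ** 3


def pack_rgb(r: int, g: int, b: int) -> int:
    """Pack three 8-bit channel values into one 24-bit integer (assumed layout: 0xRRGGBB)."""
    return (r << 16) | (g << 8) | b


def unpack_rgb(value: int) -> tuple[int, int, int]:
    """Recover the three 8-bit channel values from a packed 24-bit integer."""
    return (value >> 16) & 0xFF, (value >> 8) & 0xFF, value & 0xFF


print(color_count(8))    # 16777216
print(color_count(10))   # 1073741824
print(hex(pack_rgb(255, 128, 0)))  # 0xff8000
```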

by Wikimedia Commons

One screenful of pixels is called a 'frame', and the 'frame rate' indicates how many frames are shown in succession per second. Typical television broadcasts run at around 30 fps (frames per second) or 60 fps, or 50 fps in some regions such as Europe, while movies run at 24 fps. Most people cannot count by eye how many images are displayed per second, but when the frame rate differs, the motion in the picture looks completely different.
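To get a feel for these numbers, the sketch below computes how long each frame stays on screen at a given frame rate, and how much raw data one second of video would take. It assumes uncompressed Full HD frames at 24 bits per pixel, and the helper names are hypothetical. Real video files are compressed and far smaller than this.

```python
def frame_duration_ms(fps: float) -> float:
    """Time each frame is displayed, in milliseconds."""
    return 1000.0 / fps


def raw_bytes_per_second(width: int, height: int, bits_per_pixel: int, fps: int) -> int:
    """Size of one second of uncompressed video, in bytes."""
    bytes_per_frame = width * height * bits_per_pixel // 8
    return bytes_per_frame * fps


for fps in (24, 30, 50, 60):
    print(f"{fps} fps -> {frame_duration_ms(fps):.2f} ms per frame")

# Uncompressed Full HD, 24 bits per pixel, 30 fps.
print(raw_bytes_per_second(1920, 1080, 24, 30))  # 186624000
```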

The video below compares animations performing the same motion at, from left to right, 15 fps, 30 fps, 60 fps, and 120 fps, and it makes clear how much the motion changes with the frame rate. The higher the frame rate, the smoother the motion looks, but a higher frame rate is not always better. About 24 fps to 30 fps is said to be the most natural frame rate for humans.

Framerate Comparison 15/30/60/120 fps-YouTube

There are also two methods of displaying video frames: 'progressive (p)', in which each complete frame is displayed one after another, and 'interlaced (i)', in which each frame is split into two fields of alternating lines that are displayed in turn. Interlacing was devised so that high-resolution images could be sent even over analog broadcasting, since it cuts the amount of data roughly in half, but it is a double-edged sword that makes flicker and noise more likely to appear on the screen. In recent years, thanks to advances in video and communication technology, progressive has become the mainstream rather than interlaced. For example, the 'p' in the Full HD standard 1080p stands for progressive.
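The idea behind interlacing can be sketched by treating a frame as a list of rows and splitting it into two fields, one holding the even-numbered rows and one holding the odd-numbered rows. This is a simplified illustration with hypothetical function names: real interlaced video captures the two fields at slightly different moments, which this sketch does not model.

```python
def split_fields(frame: list) -> tuple[list, list]:
    """Split a frame (list of rows) into a top field (rows 0, 2, 4, ...) and a bottom field (rows 1, 3, 5, ...)."""
    return frame[0::2], frame[1::2]


def weave(top: list, bottom: list) -> list:
    """Rebuild a full frame by alternating rows from the two fields."""
    frame = []
    for top_row, bottom_row in zip(top, bottom):
        frame.append(top_row)
        frame.append(bottom_row)
    if len(top) > len(bottom):
        # A frame with an odd number of rows has one extra row in the top field.
        frame.append(top[-1])
    return frame


# A tiny 6-row "frame" where every pixel in a row holds the row number.
frame = [[row] * 4 for row in range(6)]
top, bottom = split_fields(frame)
print(len(frame), len(top), len(bottom))  # 6 3 3
print(weave(top, bottom) == frame)        # True
```

Each field carries half the rows of the full frame, which is where the roughly halved amount of data per transmitted image comes from.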

Mr. Krusinski notes that what he has described covers only part of how video works and that the reality is more complicated. He adds, “Video is ultimately a representation of light as numerical values, and knowing how these values are arranged and what they constitute lets you handle video efficiently.”


in Video, Posted by log1i_yk